Technical Breakdown: How AI Agents Ignore 40 Years of Security Progress

Now Playing

Technical Breakdown: How AI Agents Ignore 40 Years of Security Progress

Transcript

361 segments

Welcome to today's video on the security

nightmare inherent to AI agents.

This is going to be kind of technical.

So if you're not a technical person or you want

an on technical version of this information

to give to a non-technical person in your life,

I have a greatly simplified version of this

video on my main channel and I've linked that

video below.

The last few weeks, everyone has been all abuzz

about AI agents.

Microsoft declared 2026 the year of the agent.

There was a recent tweet from a senior

developer at Google praising Claude Code that

went viral.

The Claude Code team has been releasing a bunch

of "how we use Claude Code agents ourselves"

posts.

It's just been a thing.

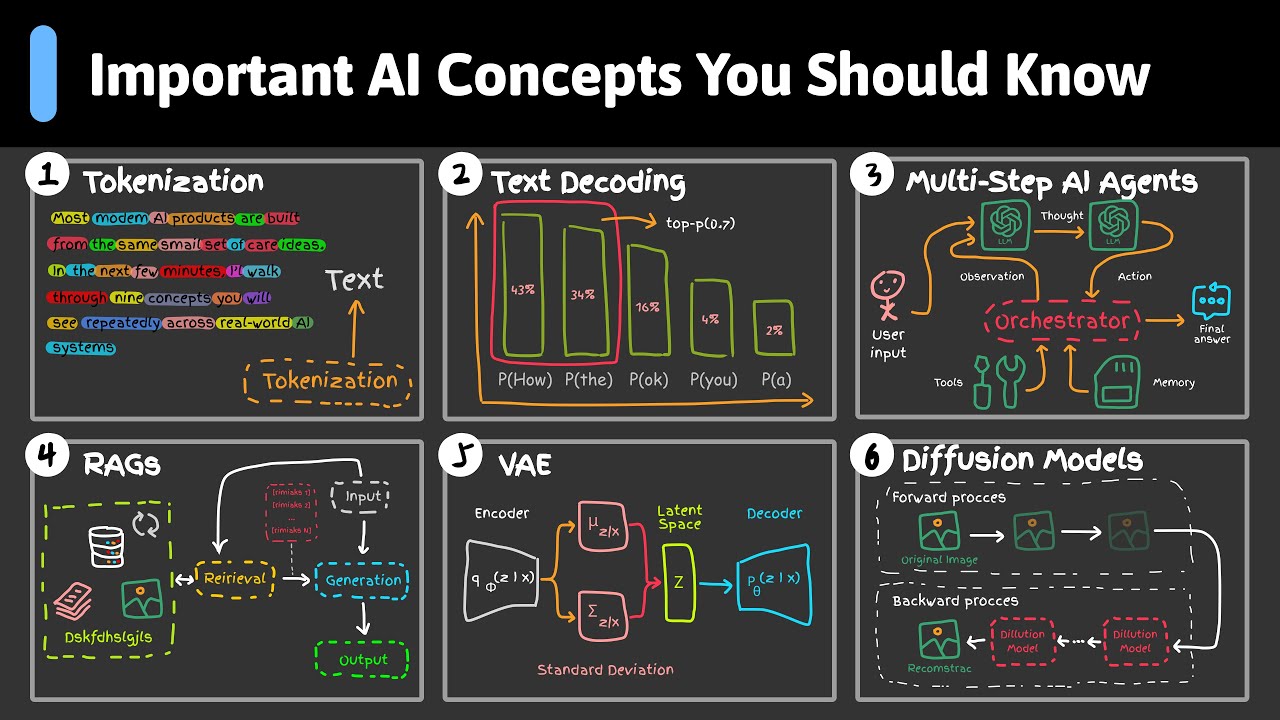

So let's talk briefly about the technology that

underlies virtually all modern computers,

which is called the "Von Newmann architecture."

This design is incredibly flexible and it

allows for easier construction of computer

hardware

than some alternative designs, but it contains

what has been referred to as the "original sin"

of computer security, which is that the code

the computer is supposed to be executing

and the data the computer is keeping in memory

are both stored in the same memory in the

same way and the CPU has no distinguishing

between instructions that it's supposed to

be following and the instructions that came

from malicious data acquired from an untrusted

source that really shouldn't be executed ever.

This original sin has led to pretty much every

remote code execution vulnerability since

the history of computers and as well as a lot

of other security weaknesses that have

plagued our industry over the decades.

There have been numerous attempts to build on

top of this architecture to try to mitigate

this problem, it can't be fixed, but it can be

made much much safer and harder for attackers

to exploit.

One of these mitigations built into modern

programming languages like Go or Rust, those

languages built in type safety to prevent data

of one type from being interpreted as

a different type.

They have strict built in bounds checking to

try to prevent overflows.

They have ownership and concurrency primitives

to try to prevent race conditions.

There are other features to help with this like

data execution prevention, which marks

pages and memories being non-executable.

There's address space layout randomization,

which means that attackers won't be able

to predict where code will be in memory to jump

to it, which makes it a lot harder for

them to know what to try to overwrite.

There are stack canaries, which are special

values placed on the stack that are verified

prior to executions so that the execution will

be stopped if the stack gets overwritten.

But I want to make sure you understand, those

mitigations, as sophisticated as they are and

as much work has gone into them over the last

60 years, are just mitigations, not fixes.

We still have frequent remote code execution

vulnerabilities being exploited in the wild.

People across the entire computing industry

have been working tirelessly for decades to

try to reduce the risk of bad things happening,

and yet our best security minds, even after

more than a half century of work, haven't been

able to alleviate this problem.

They've just managed to make it mostly tolerable

for most people most of the time.

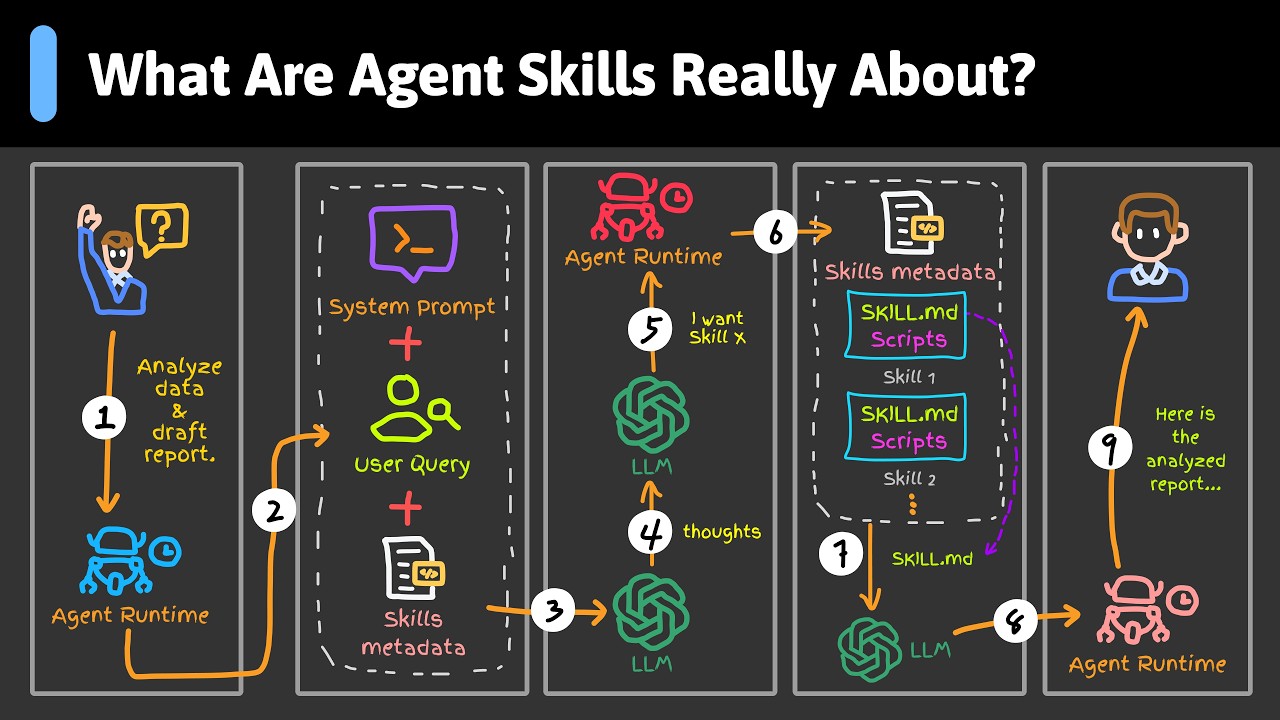

Enter the AI companies who, in their infinite

wisdom, and I mean that in the most sarcastic

way possible

in case that wasn't clear, decided that the Von

Neumann architecture's lack of memory

safety was far, far too secure and structured

for what they had in mind, said that the

security industry "hold my beer" and then

implemented an architecture that not only makes

no distinction between instructions and data,

but requires the code and the data to be

combined into a single embedding matrix that

neither contains nor retains any information

about what started out as a prompt, what was

additional context and what was previously

output, so that during neural network

processing at the core of the architecture

there's literally

no difference between the way the instructions

and the data are treated, and they're all

run through one after the other.

To be clear, this design knowingly exempts

itself from all of the security advances since

the 1980s, and then it takes the initial

original sin of computing and it deliberately

makes

it even worse by erasing even the tiny

insufficient amount of distinction there used

to be between

code and data.

The way L.L.M.'s work, and this is not an L.L.M.

internals video, so I'm not going

to get too deep, but the L.L.M.'s take the

prompt and they run it through a bunch of

matrix math to decide what the next token

should be, and then it depends on that token

to what it operated on last time, or as that

concatenation through again to select the

next token and a piece of steps over and over.

When things get complicated is when there's not

only a prompt in the output, but a bunch

of other contexts as well.

So example, when your prompt says 'summarize'

this web page, that web page gets grabbed

from the internet and added to the context it

has run through the matrix operator, along

with the prompt.

When that happens, there's no distinction

between what started out as a prompt and what

is the context it's operating on, and things

get scary when that web page or email or

whatever

other content is looking at, that the L.L.M. is

summarizing or otherwise operating one

came from a source that isn't trusted, because

if that content contains instructions that

may be interpreted by the L.L.M., then the L.L.M.

has no inherent way to know the difference

between the prompt it was asked to follow in a

prompt-like language embedded in the content

it's processing.

When the context the L.L.M. is processing

contains prompt-sounding language that the L.L.M.

might execute, we call that a prompt injection.

And when the prompt being injected was pulled

in, not directly because the user gave it to

the L.L.M. themselves, but as a result of some

operation that the L.L.M. took, like

grabbing a web page, that's called an indirect

prompt injection.

And you know how untrusted content gets pulled

into the current context and combined with

the prompt that the user wants to have executed?

AI agents who can fetch web pages and pull

them in on demand, operate on other untrusted

data like emails or code dependencies. And

how do malicious prompt hidden in content, pull

in from untrusted sources, manage to

harm the user? Again, AI agents, who can leak

the user's private information to the internet,

write malware into the user's disks to delete

and encrypt the user's files to facilitate

a ransomware attack.

Basically, anything that the agent has the

power to do, a malicious prompt can force

the agent to use to act against you for benefit

of the hacker.

And now, knowing full well that this is the

security situation they built into the

technology

that they're stacked on, and even having been

forced to admit buried in paragraph 10

of a long, unpleasant jargon-heavy blog post,

that they expect this problem to be unsolved

for years,

The AI vendors like OpenAI are pushing ahead

with trying to get the public to adopt AI

agents and AI-enabled browsers with embedded

agents in them.

This tech knowingly and deliberately pulls

content in from third-party sites, visited

by the browser or accessed by the agent, and

combines that untrusted third-party content

with the agent's previous instructions in such

a way that the underlying tech has no

way of knowing which words turned vectors came

from which kind of source.

I've lived through a nightmare like this before.

In the days of the early web, when attackers

were discovering what the new browsers and

browser features like JavaScript allowed them

to get away with, and how they could

use that to steal data and money from users of

those browsers, and steal data and money

they did, lots of it for years.

I've seen what happens when someone pushes an

insecure architecture on the world before.

In the 1990s, the bad guys created a thing we

called "malvertizing," where an advertisement

gets included on a website and injects malware

onto unsuspecting users' computers when they

visit inside sites just the New York Times,

believe it or not.

Since then, a lot of infrastructure has been

created to detect ad content that might be

malicious and might take malicious actions and

prevent them from being spread through

the ad networks, and yet, the malvertizing

still exists.

Here's an article from a couple of weeks ago

describing a malvertizing scene found in

the wild, although it is much, much less common

than it used to be.

But verifying the integrity of ads in a web

page context is fairly well understood at

this point, and it has pretty clear warning

flags that you can look for.

JavaScript codes pretty much have semicolons in

it, so you can look for those, likewise,

HTML tags have angle brackets, aka less than

and greater than signs, you can look for those.

That way, you can see where you need to pay

more attention.

It's, I'm oversimplifying, but there are

markers that you can look at.

But there's no easy indications like that for

LLM prompt injection.

It's much harder to detect, since the malicious

content is just made of ordinary language

phrases.

As more and more people start using agents, the

malware makers are going to have a field

day coming up with various ways to steal users'

data and infect their machines.

How many times have you heard warnings about

how clicking on links can infect your computer?

Well, with indirect prompt injection, an agentic

web and skilled malware creators,

no clicking will be necessary.

This is unsafe by design, and they're pushing

it anyway.

I've put links to a bunch of different exploits

that have already been found below.

I predict that the next few years will be more

and more exports found, and the AI companies

will just try to patch them up, and then the

attackers will figure out how to work around

the patches and go find more.

So let's talk about some defenses that articles

claim will help.

So here's an article from Google.

It lists these defenses: prompt injection content

classifiers, security thought reinforcement,

mark down sanitation and suspicious URL

reduction, user confirmation frameworks, and

end user

security mitigation notifications.

So let's go through these one at a time.

Prompt injection classifiers means trying to

find phrases in content that might be malicious.

Good luck with that.

I've already explained how much harder it is to

detect bad prompts than bad JavaScript.

And history from watching how attackers have

exploited buffer overflow attacks tells us

that the bad guys will find ways around that

over and over again.

Security thought reinforcement means telling

the AI not to let itself get tricked by bad

content.

If you could trust the AI not to be tricked by

bad content, then we wouldn't be here

talking about this at all.

So good luck with that one too.

Markdown sanitation and suspicious URL

reduction is just a specific type of content

classifier.

User confirmation framework means putting pop-ups

in the workflow to tell the user c"lick here

if it's OK."

First off, this is just putting the blame on

the user.

And besides that, the attackers will be working

on tricking the AI to think it doesn't need

to ask the user.

And besides that, users just tend to stop

paying attention after all those "are-you-sure?"

dialogue boxes and just click them.

End user security mitigation notifications is

also just making the user responsible for

getting hacked.

So here's OpenAI's recommendations.

Quote, limit logged in access were possible,

carefully review confirmation requests, and

give agents explicit instructions when possible,

which is blame the user, blame the user, and

blame the user.

They just don't have any good way of preventing

this, but they're not going to let that stop

them from pushing it on every user they can.

There's another fundamental computing issue at

play here, which is called the halting problem.

I assume if you're watching this, you're

familiar with the halting problem, but to

refresh

your memory, it's not possible for one program

to predict whether or not a particular set

of inputs will cause another program to halt or

not.

Likewise, prompt injection is not a problem

you can use AI to fix. Asking one AI to read

through potentially malicious content before

that content gets given to another AI just won't

help, because the first AI is also vulnerable

to the same kind of prompt injection attacks

that malicious content might contain.

Now there are specific versions of this problem

that you and I as programmers have to deal

with that normal users do not, which is

malicious prompt injection hidden in code,

specifically

hidden in open source libraries that our code

pulls in.

As your Claud Code or whatever reached through

your whole code base, including whatever

dependencies

your code pulls in, Claud Code is vulnerable to

any sufficiently bad prompts embedded in

the code comments or even in the read me files.

It's a huge problem.

So what I do, and I made a video talking about

my setup, I'll put a link here.

I have a dedicated machine that I run my code

agents on.

It's an Intel based Mac mini that was my

desktop once upon a time.

I removed Mac OS completely put Linux on it,

and I use QEMU to run virtual machine, and

I run each AI agent in its own virtual machine.

I don't give agents my GitHub credentials or

any other credentials, I have all the agents

right to local clones of GitHub repositories,

and then I grab the code that the agents

generated

to review it manually, and then I push whatever

up to GitHub that I want from my machine.

And if anything goes wrong with the agent, I

can just revert the virtual machine back

to the last known good state.

It's a pain in the ass, no doubt, but it's not

nearly as much of a pain in the ass as

cleaning up a machine after it gets hacked,

trust me, I had to do that many times.

I'm sure I will be talking about this more.

This is going to be haunting us for years, but

I'm going to wrap this video up now.

I could probably keep complaining about this

for hours, but I want to get this published.

So until next time, thanks for watching, let's

be careful out there.

Interactive Summary

Ask follow-up questions or revisit key timestamps.

This video delves into the critical security vulnerabilities inherent in AI agents, tracing the issue back to the "original sin" of the Von Neumann architecture where code and data are stored indistinguishably. The speaker explains how Large Language Models (LLMs) exacerbate this problem by merging instructions and context into a single embedding matrix, leading to risks like indirect prompt injection. After critiquing industry-standard mitigations as largely ineffective or user-blaming, the video provides a technical guide on using isolated virtual machines to safely run AI agents in a development environment.

Suggested questions

5 ready-made promptsRecently Distilled

Videos recently processed by our community