The Early Days of Anthropic & How 21 of 22 VCs Rejected It | The Four Bottlenecks in AI | Anj Midha

AI alignment, don't get me wrong, is hard, but not the hardest problem. Human alignment is really the problem right now.

>> Our guest today is the most prominent AI investor in the ecosystem, Anj Midha. Why is he the most prominent? Three reasons. Number one, he's one of the founding investors of Anthropic. Number two, he led AI investments for Andreessen Horowitz, where he made investments in Black Forest Labs, Mistral, and Sesame, among others. And third and finally, today he's the founder of AMP, where he provides compute to and invests in the world's best AI companies.

>> If we don't secure frontier model inference, or what I call state-of-the-art inference, behind a coordinated Iron Dome, I don't think we have a sustainable shot at staying at the frontier over the next decade. There's no saturation in superconductor discovery at all.

>> Ready to go. And I am so looking forward to this, dude. I have stalked the [ __ ] out of you for the last three or four days. I spoke to Bing Gordon; I had a catch-up with Bing before this. Very nice to speak to him. So thank you so much for joining me today, dude.

>> Thanks for having me. It's been too long. It only took us what, eight years, nine years? I forget when it was.

>> I was 12 when we last did it. Yeah. Well, 12 in startup land is 25, right? So,

>> Dude, I'm confused. Help me out. I had Demis on the show the other day from DeepMind. He was like, "Yeah, I'm not sure if we're seeing scaling laws, but we are definitely seeing slightly diminishing returns on performance as we scale." So, potentially, are we getting to a stage where increased compute is no longer leading to increased performance?

>> Oh, no. Absolutely not. No, that's not true at all. In certain domains that are well explored, like coding for example, yes, there's an increasing amount of compute required to get an incremental gain on some eval that's super saturated. But what about materials science? I'm sitting here at the Periodic Labs office. My latest incubation is called Periodic Labs, and I spend three days a week here in Menlo Park. We have a 30,000 sq ft facility where we have LLMs that predict new materials, new superconductors. We then have robots synthesize those new materials, and then we have physical machines, like X-ray diffraction machines, validate whether those materials have the properties that were predicted by the LLMs. And then we pipe that verification data back into our training run. I can tell you, throwing more compute at the problem is probably yielding super-exponential gains right now per iteration. So it depends on which domain you're talking about, which modality. There's no saturation in superconductor discovery, for example, at all. The bitter lesson is alive and well.

>> I totally get that. Can I ask you, when we look at the bottlenecks around performance and progression today, what are the bottlenecks that persist most significantly to you? Is it algorithms? Is it data? Is it compute? Can you help me understand which is most lagging?

>> So there are four or five. There's context feedback, which I'm happy to talk about. There's compute. There's capital, which you need to continuously deploy the compute and the context feedback loops. And then there's culture, and I think culture might actually be the most important bottleneck of all. Those are the four I would say.

Now look, algorithmic innovation is basically a function of culture, because if you have the right culture, you get to attract the best researchers. The best research talent then wants to work on pushing the frontier, and algorithmic innovation just falls out of having a really good team that's very flexible on what kind of architecture they want to use. If you have the right culture, the algorithmic innovation bottleneck solves itself, because the researchers are not tied to one architecture versus another. They're not going, "I'm all in on LLMs or transformers versus diffusion models." The best scientists and researchers just want to solve the problem, the mission. And if you have a very mission-driven culture where they're like, "We want to move the frontier of coding or the frontier of materials science," the algorithmic stuff takes care of itself.

So that's actually not the bottleneck anymore, in my view. Two or three years ago it was a huge bottleneck: we were trying to figure out which algorithms scale, and whether there are limits to the transformer architecture versus diffusion models. What I've come to realize is that if you solve the culture problem, you can solve the research and algorithmic problem. Then context feedback, which is the data you need to keep doing frontier research over and over again, is step number one, because I think that is also where you have the most business and commercial advantage. There's lots of alpha and value to be gained in pre-training, mid-training and so on. But in that last mile, where you deploy a model or an agent in some new domain and collect feedback on how it's performing in real time (like I was saying, here at Periodic we do physical verification of materials science), wherever there are unique context feedback loops that are missing today, that's where you probably have the biggest bottlenecks on capabilities.

So what you should be doing if you're trying to advance the frontier is going, "Okay, these models suck." For example, about a year ago there was a lot of talk about models being good at physics and chemistry, AI for science. I was a visiting scientist in the applied physics department at Stanford, and we started benchmarking these models, Claude, Gemini and so on, and surprise, they sucked. They were so bad. I was like, there's this disconnect between the marketing hype of AI for science and the reality, where these models were terrible, at the time at least. They were starting to get good at code, but they were terrible at scientific analysis.

The conclusion was pretty simple. They were just missing a lot of the physics and chemistry data you need to reason about the physical world. And we don't have enough of that data on the internet, because the internet is mostly pre-training data about things like blogs and blah blah blah, and coding. But if you need physics and science, that's a real bottleneck, because that data is locked up in national labs and academic labs. It's locked up in physical semiconductor manufacturing plants. How do you get that data in? That was the bottleneck I realized was really the critical part of getting these models to reason about the physics and science frontier, which is something I care about deeply.

The way we solved that at Periodic was to set up a physical lab with robots doing all that. You could apply that same recipe to whatever domain where you want to see more and more progress. Then you ask, okay, how much compute and infrastructure do you need to keep that RL loop, or the physical verification loop, scaling at bigger and bigger scale? And then you need the capital to fund all this: equity, debt, a whole bunch of different structured finance vehicles to get land, power, and shell. So that's the compute bottleneck. And then lastly there's culture. Because if you have all of those three things but you don't have the right team and the right mission-driven culture, the whole thing falls apart. So those, in my mind, are the four bottlenecks I wake up every day trying to figure out how to unblock for the best teams.

>> If we just go through them, when we look at that context feedback on the data side, will we then see a generation of vertically integrated foundation model companies like Periodic for a ton of different things?

>> Yeah. You know, when I went to grad school for machine learning, I went to Stanford for bioinformatics, which was machine learning applied to healthcare. The space was not as good at marketing as it is today. So, superintelligence, love it. At the end of the day, what are we talking about? We're talking about very powerful models within some domain. And we are seeing, within distribution, very, very powerful capabilities that you could definitely call superhuman, because there's no way, for example, that I as an individual scientist could analyze the reams and reams of data coming out of the lab here without AI models. There's just no chance. So the fact that you can take all of the data from a physical lab, throw it at a bunch of AI models, and ask them to analyze it is a superhuman capability. We didn't have that before. Okay, fine, let's call that superintelligence. Within coding, within materials science, within each of these domain distributions, we are seeing capabilities that are superhuman. We didn't have them before.

And in fact, I would say we're even starting to see automation of those tasks, especially where there's coding involved, starting to be somewhat recursive, right? If you have a good coding model, then you can say, okay, let me automate data analysis, let me automate data cleaning, and so on. Some people would call that recursive self-improvement, and it's totally happening. But it's not like I can just say to a coding model, "Please bootstrap a physical R&D lab for me in Menlo Park, get all the permitting, go find Anj to raise money from, go set up the physical infrastructure, and just bootstrap all this data." That's an entirely different kind of frontier, execution, and problem.

>> My question to you, then, is how do I determine what is not going to get Claudified in that vertical model company buildout? Because you could look at a Cursor and say, well, they've built their own vertical model end to end, and it's been Claudified, if we're being blunt. Periodic won't be, because of the physical data that's being produced in the labs. How do I know what will be Claudified versus what won't in that model?

>> Yeah, this is a good question. Okay, if we want to unlock frontier progress generally across a bunch of domains, then where are the bottlenecks and where will the value accrue? Context is not necessarily the moat; I would not say that yet. I think venture capitalists are very quick to analyze moats. But I would say context feedback loops where you have unique and differentiated access are where progress will be most legible to you, and if there are other teams who don't have access to that context, it'll also be where you have a superior business model. So here's an example I give in the class, right? Sovereign data. Are you familiar with the CLOUD Act?

>> Yeah.

>> Okay, so the US CLOUD Act says that if there are any cloud workloads running on infrastructure that is managed by an American company, then the US government has to be able to access that data. Now, if you happen to be running military, defense, mission-critical workloads in Europe on AI infrastructure that is managed by an American company, well, that context, which is super critical, can't be sent across the border.

That's an example of unique and sensitive context that needs to be run locally. So if you're ASML, or you're CMA CGM doing logistics at scale, and some of those logistics involve mission-critical supplies, you can't have your supply chain data being processed by an AI bot running on servers that are subject to the CLOUD Act. So what do you do? You look for local infrastructure partners. You start going, "Hey, who are the AI infrastructure providers in Europe that we trust?" Well, it turns out there aren't that many who can actually handle mission-critical AI infrastructure at scale. So you call up someone called Arthur Mensch, a French scientist from DeepMind turned entrepreneur, who started a lab called Mistral that is running massive workloads, and you say, "Arthur, would you actually build infrastructure that is secure locally?" And that's why, suddenly, in July of 202 at VivaTech in Paris, you have President Macron and Jensen standing on stage next to Arthur, a 33-year-old scientist, unveiling a gigawatt AI infrastructure facility in Paris. Why? Because the mission-critical context of those workloads is so important to run locally that you can't run them on Amazon AWS, GCP or Azure. And it's the first time in 15 years that hyperscaler dominance is up for grabs for startups.

>> With the greatest of respect, is that the core investment thesis of Mistral for you?

>> For me, yeah. Independence at scale at every part of the AI infrastructure stack: land, power, and shell in Europe that's sovereign and local; compute infrastructure that's local; and models that are trained locally and, by the way, fully open, so they can be deployed and customized globally wherever needed. But certainly in Europe, the full independent stack is the bet. Yeah.

>> Do Anthropic and OpenAI just accept that and roll over? I don't understand, because government is a mega portion of their efforts and workload today, and both of them, when I speak to them, are like, "Oh, we're absolutely coming for Europe." So how do they get around that?

>> Well, I can't speak for OpenAI too much, because I'm not involved there directly. But Anthropic, I will say, the mission and vision have always been very American-aligned, right? They've always said, "Hey, America is the crown jewel of the world in terms of innovation. This is where we're located." Anthropic is located in Silicon Valley, and I think the company really, really wants to do what's best for the American government and the American way of life, which is democracy and freedom. It turns out the world's largest enterprise customers are governments and Fortune 500 companies, and many of those that are overseas need these workloads to be running locally.

>> You mentioned obviously being involved with Anthropic since the earliest of days. I'm just fascinated. I think people kind of forget about their early days, almost. People talk about, oh, SBF investing early and what a visionary he was.

>> Right.

>> What were Anthropic and Dario like in the early days?

>> Well, I've known Tom forever. Tom was one of the lead authors on GPT-3. We'd been friends for many years. Tom gave me a call and said, "Anj, for various reasons, we want to leave and start this new lab called Anthropic. We're going to need a lot of capital. We're going to need compute." I had already sold Ubiquity6 at that point, so I'd kind of gone through the founder journey. And so Dario, Tom and I started doing these weekly sessions in early 2021 to try to figure out how to turn what was really a research hypothesis, which is scale the scaling recipe, into a business hypothesis. And look, I would say it really took 12 to 24 months. They did a lot of the hard work on figuring out how to really operationalize the idea of this AI pair programmer, right? Where you take the context feedback loop of the local repository, the files, the directories of programming, and in a very methodical way make predictable progress on the capabilities of software engineering.

If anything, my biggest flaw as an investor and as a founder is being too early to things. That was my lesson with Ubiquity6. I was early to the whole computer vision space, which is now obviously blowing up with multimodal generative modeling. Since then I think I have updated my strategy on how to get timing right. But at the time, the recipe was pretty simple, right? Raise some money, buy some compute, get a little bit of context data on programming, put out a basic version of the model, deploy it with teams that we trust who are doing coding, and then pipe that feedback back into the training run over and over again. And when you do that with inference, inference gives you two things, right? It gives you revenue to buy more compute, and it gives you the context feedback to keep improving the capabilities curve. And I was like, great, this makes total sense, guys, let's go raise money.

I invested a bunch of my own money, which was just my life savings, and that was not much given I was a poor founder at the time and most of my net worth was tied up in Discord stock. And it pains me sometimes to look back at the emails. I introduced them to 22 friends up and down Sand Hill Road, investors there, and we got 21 nos, right? And I was like, what are you guys thinking? And they said, "Well, Anj, this recipe sounds good in theory, but where's the proof?" And I said, "Proof? These are the guys who invented GPT-3. How much more proof do you want?" And they said, "What's GPT-3?" I was like, "Oh my god." How do you go about educating somebody who doesn't even understand the technology and the breakthroughs that are happening in the machine learning community? Now, I was lucky, because I had that training from grad school and I'd started a computer vision company. So something that was super legible to me was a completely different world for those investors we were pitching. Remember, we originally tried to go out and raise $500 million and then had to re-anchor to raising only a $100 million seed round, which at the time felt like a lot, but of course was tiny compared to how much OpenAI had raised, because by then I think OpenAI had raised a billion dollars. And so the whole idea of compute multipliers, where for every dollar of venture capital raised we could produce a unit of intelligence for six times less, the VCs did not understand. Which is why, over the next 24 months, the people who got it were either folks in the ML community who also overlapped with the effective altruist community, like SBF, or Amazon, right? This was very legible to Amazon, because they were watching what was happening with Azure and OpenAI, and they were like, well, this is super aligned. If you guys can actually create a bunch of state-of-the-art models that are hosted on Amazon, that's super accretive to the AWS business. And that's why it resulted in a deep compute-and-capital-for-equity partnership with Amazon that was originally $4 billion. A lot of this is public now, but at the time it was a really tough journey. And I would give Dario, Tom, the other co-founders, Daniela, Jack, Sam McCandlish, Jared Kaplan... it was such a brutal time getting this company going. People don't...

>> Is there anything you would have advised them to do differently, knowing all that you know now?

>> I'm not sure I would, because the world is a very different place today. And at the time, it really did feel like there was no one they could trust.

>> Isn't it impossible not to be hauled up in front of Congress if you reach a certain scale? Whether you're Google or whether you're Facebook or whether you're Anthropic fighting against the Pentagon, you get to a scale where it is impossible not to have that conflict.

>> Oh, absolutely. No. What are you talking about? Look, I started AMP as a public benefit corporation because I think it's actually a very aligned model. Have you heard of REI, right? REI is a public benefit corporation. They make billions of dollars in revenue and profit. Have they ever been hauled up in front of Congress? No. Ben and Jerry's, public benefit corporation. Have they been hauled up in front of Congress? No. It's because they self-moderated, right, at a time, and they said, here's our mission, but we also have to build a business. And as long as you hold those two things together, they are not in conflict long term. If your goal in life is, long term, to push humanity forward in some stable, reliable way, then there are always tensions between your mission and your profit motive, and you've got to be able to moderate between those two. I think public benefit governance allows you to do that. I think we need more public benefit charters in Silicon Valley and in technology, and I think we will get there. If you look at the arc of infrastructure businesses, for example, right?

>> I actually had a chat with a mutual friend of ours who asked not to be revealed.

>> Okay.

>> And they said, "For [ __ ] sake, all these PBCs, public benefit corporations, will these startup founders not just [ __ ] win their market first?"

>> I mean, how are they feeling? Are they investors in Anthropic?

>> No.

>> Okay. So tell them to give me a call when they'd like to be investors in the world's fastest-growing business of all time. And then they can lecture me about public benefit governance and market share adoption. Public benefit governance gives leadership the ability to make decisions that are sometimes not legible to shareholders as best for them.

>> What decision? What decision can you foresee with AMP that is aligned to your mission but does not put the profit motive first?

>> There are many, up and down the stack, because we see ourselves as a full-stack scaling partner to the best frontier technology teams. We also see ourselves a little bit as an institution whose job is to propose independent standards for AI and to evangelize the adoption of those standards through profit-generating businesses. We have a venture capital business. We also have an infrastructure business. And a good example of this for now is that we're actually giving away most of our compute at cost. Now, if you're a shareholder, you'd go, "Wait, Anj, billions of dollars of compute infrastructure you're giving away at cost?" Yes, because we think that's the right thing for humanity, and we think that's the right long-term way to have a healthy independent ecosystem, which is what our mission is. AMP is a public benefit holding company. Our vision is to ensure there's a healthy independent frontier technology ecosystem. Our mission is to maximize the world's frontier output, and to do that long term. We think the teams that are truly pushing the frontier of science and engineering need compute access, and many of those teams today can't afford to pay the price-gouging, extraordinary prices for compute infrastructure. And so, you know what, we're happy to provide them access in a way that's mission-aligned.

>> Anj, how do you secure the compute supply? Maybe I should know this, but it's the most starved resource today. How do you secure a resource that no one else can seemingly secure?

>> Well, step one is you get there first, before people realize how valuable it is. And luck. You know, I've been beating the drum on this for four years now, right? When I got to a16z as a general partner, the first thing I did was sit down with Marc and Ben and say, "We need more compute. We need compute access for these incubations I'm going to do." And they said, "No problem, Anj. Let's set up a program." So we used our balance sheet to start procuring compute through the Oxygen program. That gave me the ability to build pretty deep relationships with the industry and build trust with compute partners, with whom we now have lots and lots of relationships that we're scaling in ways that would be very hard if I hadn't had that time and the flexibility to understand what is required to really get that infrastructure right. We've talked a little bit publicly about what we're building, which is the AMP grid. Essentially, what the electricity grid did for electricity, we're trying to do for compute infrastructure. We see ourselves as an independent system operator of the grid.

We we're not a cloud provider. We don't

own our own data centers. Uh we're not a

traditional venture capital firm either.

We see ourselves as an independent

system operator, which means our job is

to coordinate capacity across the

ecosystem in a way that allows the best

teams, the best independent teams to

provision for their base load, not their

peak. So they don't have to

overprovision but when they want to be

able to spike up and down for training

runs for inference needs they they feel

secure that the capacity exists. We are

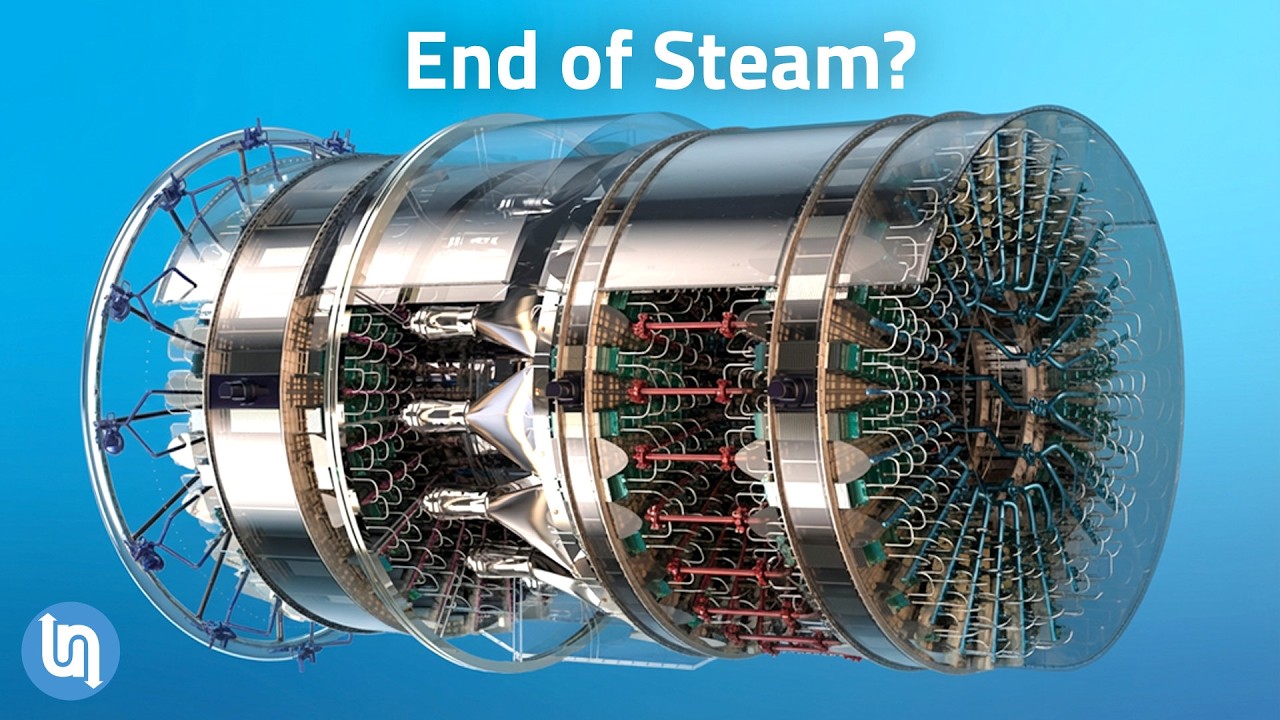

roughly in 1885 industrial you know

revolution England right now where you

have all you know these these frontier

labs are like factories that the steam

engine has been discovered. You can use

steam to produce all kinds of new

products and many of them are running

their own generators in their backyards

at half capacity. And I'm going, this

makes no sense. Let's all pull our

generators so that a shoe factory can

spike up during the day, a steel factory

can spike up during the night, and then

you maximize utilization um and

ultimately output. When you think about

allocating it, are you not using compute

and the cost of compute as a loss leader

for your venture fund business which

then comes in and says okay you name any

of your incredible businesses that you

own whether it's your anthropics or your

MLS or your Black Forest Labs and say

okay you'll get the compute at cost but

for that we need $300 million invested

and that's your way of winning. That's

that's not at all how we make the th

those are not that's not the deal. The

deal is

>> okay

>> we the deal is I incubate new companies

like periodic labs one at a time. That's

I can only do this one at a time because

I I like to team up with scientists or

engineers who at the forefront of their

field.

It takes a lot of work to create these new companies from scratch. In many ways I had the privilege to realize that we are entering a back-to-the-future era of venture capital. If you think about the birth of modern industries, let's talk about semiconductors, gene editing and the biotech industry, or self-driving cars, Silicon Valley in the early days of the founding of what I call these frontier industries, the way you started the most iconic companies is very different from how companies were funded for the last 10 years in the ZIRP era. Intel, for example, was a very close partnership between a couple of scientists and an investor named Arthur Rock, who was a founding investor and was at the office every day. Arthur literally wrote the stock incentive plan. He used to run all-hands at the company every week. If you look at Genentech, which was incubated in the basement of Kleiner, its co-founders were Herb Boyer, a professor at UCSF, and Bob Swanson, who was an associate at Kleiner. And I got to apprentice in that mode of venture capital, because when I got to Kleiner I was 20, wrapping up grad school at Stanford med school, but working nights and weekends at Kleiner on the investing team. Brook Byers, who was the B in KPCB, had an office next to me, and he had some free time, so I would go to him and be like, "Brook, teach me your ways." And he regaled me with all the stories of how Genentech was founded. And I was like, "Wait. So you're saying basically Bob co-founded Genentech here in the basement at Kleiner?" He's like, "Yeah, that's what it meant to be a partner." And I said, "Well, that's not what happens here anymore. We write a bunch of checks to SaaS companies and then they go off and do stuff." And he was like, "Different times." And if you look at that...

>> Are they mutually exclusive? What I mean by that is, can you have a venture ecosystem where you have a bunch of people writing a bunch of checks, as we have done for the last 10 years, alongside a next generation, or to your point, a back-to-the-future era of venture capital, where you co-found the business side by side? Can they run side by side, or are we actually entering a back-to-the-future era, as you say, where value accrual is on the co-founding and incubation side?

>> I think it's very hard for them to coexist inside of one person, and it's sometimes very hard for them to coexist inside of even one firm. There's a reason I'm sitting here at Periodic Labs. I work here three days a week. Every day from 8:00 a.m. to 8:30 a.m. for the last year, Liam Do and I have had a stand-up where we go through the priorities of the company, and then we prioritize and go execute. The compute team at AMP is sitting upstairs procuring compute for the Periodic guys. My role models have always been the Arthur Rocks and the Bob Swansons and the Mike Markkulas. In personal computing, effectively the first CEO for the first year of Apple was Mike Markkula. He was an angel investor, and he was the one doing all the capex, supply chain, capital and all of that stuff that allowed Steve Jobs and Woz to focus on the product and the engineering. That kind of deep partnership is what I get really excited about.

>> Can I go back to something you said before, which is that we're at the Industrial Revolution stage? And I was like, okay, help me understand that. If we're at the Industrial Revolution stage, what does that mean for where we're going and how I should be acting as an investor today?

>> You have to hold two things in conflict that can seem paradoxical. And this is the most important thing I learned from Marc and Ben, which is that the future is not determined. So anyone who tells you that they can predict the future with certainty should be taken with a healthy dose of suspicion. Instead, I try to approach things like a scientist and go: what are the biggest bottlenecks? Let's come up with a hypothesis on how these bottlenecks will be solved, let's run multiple experiments in parallel, and then, whichever one emerges, you just have to be very truth-seeking and be willing to say you're wrong, right? And I would say, as an investor, your job is to come up with a hypothesis for where the future is, be willing to run multiple different experiments in parallel that are aligned with your mission, be willing to be wrong, and be honest with your LPs that some of them may be wrong.

>> What do you say to a Brian Singerman of the world, who always said, "I'm not smart enough to predict the future, but my job is to pick founders who are able to do so"?

>> I think the safest way to

predict the future is to invent it,

right? So do the hard work. Come up with

your point of view on, if we're in

industrial revolution England, what

happened next, and what were the emerging

properties of the businesses that became

valuable institutions over the next

50 years after 1885? And then figure out

which figure

from history of that era you

look up to the most. You

know, go read about their lives and the

businesses they ran and the

tensions that emerged in the practice of

their business later in life, because

they made mistakes when they were young,

and try to learn from their mistakes, and

then go and execute.

>> What's a parallel from the 1885-onwards

time frame that

you think will play out in the next era?

>> Well, obviously in the world of

infrastructure, I think we need

something like the grid for

compute infrastructure. So that's what

I've spent most of my days on: a

coordinating mechanism like the one that

allowed, not the

commoditization necessarily, but the

transition of coal and electricity

from being resources that were

being hoarded to being stable, reliable

commodities that the best

engineering teams and the best factories had

access to, right? So that's

what I think about a lot. Since

you're so talented at

media and you're so talented at

storytelling, and

your mission is to push the European

continent, one of the things I would do

if I was you is

figure out how we educate

the leading capital allocators and

infrastructure allocators in Europe

about the coming era, whether that's

through media or through

educational programs, and get them to

understand their role in unblocking the

bottlenecks for the best scientists and

engineers in Europe.

>> It's largely a lack of pension fund

reform in a lot of cases, to be quite

honest.

>> Okay, so spend your time on pension fund

reform.

>> How much more

do we need in Europe for frontier AI to

be what we think it can be? Is it

2x? Is it 10x?

>> That's a good question. I would try to

go about it with both a top-down

and a bottom-up sizing approach.

Um, you know, for us at AMP, when I look at

the grid we are building out, which is

sort of reasoning by analogy, we

have started securing about 1.3 gigawatts

of compute infrastructure. That's roughly

$40 billion of cloud spend over the next

four years, and that is financed roughly,

you know, with about 20%

equity and the remainder debt. So 20%,

that's about $10 billion of equity

capital; the remainder is all debt

capital. We have a bunch of partners that

help us put together these equity and

debt packages to secure compute

infrastructure for our companies. I would

say in Europe,

I would talk to Arthur and figure out

how much he thinks is required for the

independent ecosystem over there. But in

multiples of gigawatts, if you're

doing your atomic unit of math

in gigawatts, from a

top-down perspective,

you know, I think Google is roughly at 12

to 15 gigawatts, that I'm aware of,

of infrastructure for internal and

external deployment needs. Now, they have a

huge land, power, and shell pipeline coming,

but, you know, if Europe does not have

access to Google-level infrastructure,

then what are you guys even doing, right?

That's roughly what the continent

needs for full sovereignty: to have

at least as much infrastructure

locally as there is within the Alphabet

holdings pool

over the next four years.

>> What's easier, the equity raise or

the debt raise?

>> I would say the biggest challenge has been

figuring out the right aligned financial

structure

across both, in a way that's legible to

capital allocators at scale. It took me

about a year to really get all the

pieces right. But there are very large

equity pools.

Let me put it this way. There are a lot of

long-term, mission-aligned

balance sheets in the world

who want to help frontier scientists,

researchers, and university labs get access

to the compute they want, but they don't

have opex.

They don't have cash to spend on the

compute. So if you can find a way to

align equity, debt, and balance sheets in

a way that's derisked,

the fundraising is not a problem. It's

actually a systems design problem,

which took me, again, a year; it

probably took me four years to get right.

But now that we've figured it out, it's

not been a problem.

>> Do you think we are underinvested still

today in data centers?

>> We are deeply underinvested in security,

in secure compute. Okay, let me put it this way.

We are not in an AI crisis. We are not

in an AI bubble, for sure. I'll tell you

that, since that's the question I

keep getting asked. We are definitely in

a GPU wastage bubble, where there are

stranded pockets of compute, like

billions of dollars of compute, that are

sitting unutilized, and if we could pull

them together on a grid across the

independent ecosystem...

>> Why are they unutilized? Sorry.

>> For a couple of different reasons. Um,

one is that compute is not fungible.

So, unlike electricity, which had to go

through a process of standardization, you

know, AC/DC, where megawatts

are megawatts, compute is not fungible

today. Forget fungibility of

compute across different manufacturers

like Nvidia and AMD; within a

manufacturer,

Nvidia chips, for example the H100s, the

GB200s, the GB300s, these are all

completely different chip types. So if

you have one cluster where you're doing

a training run on H100s, and then you

want to do continued post-training

of that, or have that do a

distributed training run of that

training workload on GB200s, it

doesn't work. So they're just

stranded pools of compute, because

the atomic unit of computation is flops.

I wish flops were fungible, but not all

flops are born equal today. And so if

you provisioned a cluster two or three years ago

with H100s, and now you

actually want to run some of those

workloads for the newer-generation

models, you're memory-bound by the H100

chips. You can't unlock, you know,

the benefits of the Blackwell chip

without basically just buying a new

cluster. And so now suddenly you have

this H100 cluster

that you don't want to do training on

anymore because it's old school. The

chip doesn't have the

right memory properties to train

your frontier models. And

it's very hard for any individual company to

see all of this stuff, but when

you're on seven or eight boards like I

am, and you've been doing this for, you know,

15 years, you start to see patterns

emerge, and you're going, wait a minute, why is

there all this unutilized compute

sitting here and there?
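A toy sketch of the fungibility point, not something described in the conversation: it assumes, as a deliberate simplification, that a workload can only run on the exact chip type it was built for. The hypothetical `Offer` and `Request` types contrast electricity-style pooling, where every idle unit counts toward any demand, with the way idle GPU capacity gets stranded. Provider names and GPU counts are made up.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical types for illustration only; real clusters differ in far more than chip type.


@dataclass(frozen=True)
class Offer:
    provider: str   # who has idle capacity
    chip_type: str  # e.g. "H100", "GB200"
    gpus: int       # number of idle GPUs on offer


@dataclass(frozen=True)
class Request:
    chip_type: str  # chip generation the workload was built for
    gpus: int       # GPUs the workload needs


def fungible_supply(offers: list[Offer]) -> int:
    """Electricity-style pooling: a megawatt is a megawatt, so every unit counts."""
    return sum(o.gpus for o in offers)


def usable_supply(offers: list[Offer], req: Request) -> int:
    """Simplified compute reality: only offers of the matching chip type can serve the request."""
    return sum(o.gpus for o in offers if o.chip_type == req.chip_type)


def stranded_by_type(offers: list[Offer], req: Request) -> dict[str, int]:
    """Idle GPUs that exist but cannot serve this request, grouped by chip type."""
    stranded: dict[str, int] = defaultdict(int)
    for o in offers:
        if o.chip_type != req.chip_type:
            stranded[o.chip_type] += o.gpus
    return dict(stranded)


offers = [
    Offer("lab_a", "H100", 4096),
    Offer("lab_b", "H100", 2048),
    Offer("lab_c", "GB200", 1024),
]
req = Request("GB200", 4096)

print(fungible_supply(offers))        # 7168
print(usable_supply(offers, req))     # 1024
print(stranded_by_type(offers, req))  # {'H100': 6144}
```

Under this simplification, 7,168 idle GPUs exist, but only 1,024 can serve the GB200 request; the other 6,144 are exactly the kind of stranded pool described above.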

>> So are

frontier models moving faster than

the pace of chips? As you said,

with H100s, you have newer and

newer models, and then you're training

them on older and older chips because

that's what's free, and it's not moving

in lockstep. Is that the

problem that we're articulating?

>> No, no, no. The problem we're

articulating is that compute is not

fungible. There are no standards for

fungibility, and there are no

institutions enforcing standardization

of compute enough. So we are in the

pre-standardization

era of compute today, which is like

the pre-standardization era of

electricity in 1885. And I

hope we can self-regulate,

self-standardize,

and self-enforce standardization so

that we can skip the boom and bust

cycles that happened with electricity over

the following 50 years. And this happens with

every infrastructure cycle in the

pre-standardization era. It happened

with electricity in 1885. It happened

with steel. It happened with railroads.

And every time you have this boom and

bust cycle, what happens is wars are

fought.

Companies backstab each other.

It's super painful. It's annoying. And

my view is that compute not being

fungible is what's resulting in all

this talk about AI, the AI bubble. But

what people forget is that we don't have

an AI capabilities bubble. The

capabilities are extraordinary in every

domain. We have an

infrastructure wastage crisis right now. And it's

because there are no open standards.

There's no open protocol for how flops

from one data center can flow to

somebody else who needs them, across chip

types, across secure boundaries, and

it's resulting in a lot of pain for the

ecosystem. People are just...

>> If we have compute standardization in

the way that you said, will we remove the

boom and bust cycle, or is that just one

part of it?

>> I think that will go a long

way in preventing this and instead

just allowing this.

>> I'm sorry for asking. So, you're like,

"Jesus Christ, Harry, I'm a professor at

Stanford and you waste my time with

this." Which is a fair statement. Um,

British accent goes a long way though.

Um, what is the biggest bottleneck or

barrier to compute standardization that

you want to achieve?

>> Uh, it all goes back to alignment, man.

Misaligned incentives up and down the

stack.

>> How are Silicon Valley and DC not

aligned?

>> For one, I don't think we have

standardized on whether AI should be

regulated,

treated, and procured as just good

old-fashioned software or as a new

kind of system. You know, again, I

went to grad school for machine learning,

and what you learn in machine learning

101 is that

models are statistical.

They're not deterministic, right? So

when you have a statistical system,

there are some properties

of that system that are

different from a spreadsheet. A

spreadsheet is deterministic software,

and a statistical model today is not.
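To illustrate the deterministic-versus-statistical distinction, here is a toy Python sketch (not from the conversation): a spreadsheet-style sum always returns the same answer, while a language model's next token is commonly drawn from a probability distribution. The vocabulary, logits, and temperature are made up, and real models have vastly larger vocabularies. Greedy decoding is included only to show that always taking the single most likely token removes the sampling randomness.

```python
import math
import random


def spreadsheet_total(cells: list[float]) -> float:
    """Deterministic: identical inputs always give an identical output."""
    return sum(cells)


def softmax(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; a higher temperature flattens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(vocab: list[str], logits: list[float], temperature: float, rng: random.Random) -> str:
    """Statistical: the same input can yield a different token on each draw."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


def greedy_token(vocab: list[str], logits: list[float]) -> str:
    """Greedy decoding: always take the highest-scoring token."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return vocab[best]


vocab = ["yes", "no", "maybe"]  # hypothetical toy vocabulary
logits = [2.0, 1.5, 1.0]        # hypothetical model scores for one prompt

print(spreadsheet_total([1.0, 2.0, 3.0]))  # 6.0
print(greedy_token(vocab, logits))         # yes

rng = random.Random(0)
draws = [sample_token(vocab, logits, 1.0, rng) for _ in range(200)]
print(sorted(set(draws)))  # typically more than one distinct token for the same "prompt"
```

The same three logits produce one fixed answer under greedy decoding but a spread of answers under sampling, which is the property that makes a statistical model behave differently from a spreadsheet cell.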

And so should the procurement of a

spreadsheet be the same, from an IT

perspective, as that of a statistical model? Open

debate. That is the core debate. That's

the problem. AI alignment, don't

get me wrong, is hard, but not the

hardest problem. Human misalignment,

human alignment, is really the

problem we have right now in the

world. We need technologists who

understand the difference between

deterministic software and statistical

systems to propose a set of standards

for how procurement for this should

work. And then we need standards people

in DC. We have this thing called NIST.

We have various bodies in the government

that should get together and say, "Thank

you guys for proposing this standard.

This is where it makes sense. This is

where it doesn't." This is called an RFC

process.

And then we're going to standardize on this

definition of procurement. This is what

happened with TCP/IP and the internet.

It happened with AC/DC and electricity.

We have not done that yet for the model

era. And unfortunately, and these are called open

standards, the standardization process

is being confused with marketing. Now,

President Trump is actually, I think,

trying to do his best, from what I can

tell, in at least giving America enough

freedom to innovate that these standards

can even be discovered in our labs here,

because first you need somebody to actually

pioneer and figure out what the

standards should even look like. I think

there's just a lot of noise.

>> Do you

worry that the CCP is basically

subsidizing a generation of Chinese

models that are now being used by

American companies, whereby

frontier models essentially set where

model capabilities can be, and then there's

a real effort to get the open-source

Chinese models as close to those

benchmarks as possible, much, much

cheaper?

>> I mean, the engineering execution right

now up and down the stack in China is

extreme. Here's what's happening, right?

What they realized

is that the AI scaling race is not a

chip race. It's a full-stack systems

co-design race. If you

can't compete head-to-head on chips for now,

what do you do? You compete on systems

design. You say, "Okay, we

don't have leading-edge chips here,

right, yet. So let's try to compete on

systems." You co-design the chip

that you have, which might be Huawei, with the

compute infrastructure, with the

training run, and then you design that

to have a bunch of performance

improvements at every layer of the stack.

And then what you do is

adversarial distillation at scale, where

you take Western models and, from various

different endpoints, you

distill the state of the art, and

then you try to get as many performance

gains as possible on that data, and then

you release that back out to the world

as open models, and then you see what

people react to, and then you get

feedback, and then you do the next run

and the next run, and then you catch up.

And at the point you catch up, you say,

wait a minute, we're starting to be at

the frontier. Why do we need to open

source anymore? This is good enough for

our local domestic needs. It's

beautiful. And

that has actually, by the way, resulted in

innovation. They're innovating

at every part of the cycle. And

that's why Huawei chips are able to

produce capability improvements today

in China that rival some of the best

chips here when integrated up and

down the stack. In a sense, it's the

Google strategy, right? Google has

integrated land, power, shell, TPUs, Borg,

Xborg, GQM, Gemini, then the deployment.

I mean, the systems co-design there up

and down results in efficiency that

gives you huge performance gains at the

end of the day. China's replicated that

strategy, using open source as sort of a

bootstrapping mechanism to catch up.

It's extraordinary.

>> Does that concern you?

>> Are you kidding? Absolutely. That's why

I think what we need is a Western grid

where all frontier

inference is served through an Iron

Dome, right? Where, if there are any

adversarial distillation attacks on any

one of our teams, we coordinate

together. Because I'm on seven

boards, I'm in group chats where I get

texted by one founder saying, "Anj, is

anyone else noticing today that there's

a huge spike in distillation

from this region?" And then I put them in a

group chat, and we coordinate. It's very

informal right now, but what we need is...

>> You said before that state-sponsored

attacks on frontier AI labs are getting

worse. What do we not know that we

should know?

>> Um, we should know that there are

insider threats.

We should know that there's distillation

happening across the US and Europe that

is taking advantage of us

all not being united. That

distillation is taking advantage of

our political systems. Our

mission-critical infrastructure is quite

vulnerable, especially data centers that

are serving

workloads that are being used by

enterprises. And I think that from a

business standpoint, if we don't secure

frontier model inference, or what I call

state-of-the-art inference, behind a

coordinated Iron Dome, I don't think

we have a sustainable shot at

staying at the frontier over the next decade.

>> I'm sorry. What does that mean, an Iron

Dome for inference, in terms of

sustaining it?

>> It means that all inference, no matter

which company is serving it,

is served through a shared proxy, so that providers

can tell each other when there's an

attack happening on one part of the

frontier. Think of it as an Iron Dome

across the entire Western Front, right?

Because you're only

in one company,

you can't see that your model

being served through this other company

is being distilled. So it's a

deployment coordination protocol.

It's basically my group chat that

I've got with, you know, a bunch of

different founders, but scaled, where

people go, "We're seeing this attack today,"

and others go, "We are too. Let's

coordinate on a defensive response."
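The shared-proxy idea can be sketched as a cross-company alert registry. This is a hypothetical illustration, not AMP's or any lab's actual system: the class and field names, the one-hour window, and the rule of flagging a source region once at least two distinct providers report it are all assumptions. It captures the point made above: one company cannot see its model being distilled through another company's endpoint, but pooled reports can surface the pattern.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Report:
    provider: str       # which company's endpoint saw the suspicious traffic
    source_region: str  # where the traffic appears to originate
    timestamp: float    # seconds since the epoch


@dataclass
class SharedAlertRegistry:
    """Hypothetical shared service: each provider reports suspected distillation, all can query."""

    window_s: float     # how far back (in seconds) reports still count
    min_providers: int  # distinct providers needed before a region is flagged
    reports: list[Report] = field(default_factory=list)

    def report(self, r: Report) -> None:
        self.reports.append(r)

    def flagged_regions(self, now: float) -> set[str]:
        """Regions reported by at least `min_providers` distinct providers within the window."""
        providers_by_region: dict[str, set[str]] = defaultdict(set)
        for r in self.reports:
            if now - self.window_s <= r.timestamp <= now:
                providers_by_region[r.source_region].add(r.provider)
        return {
            region
            for region, providers in providers_by_region.items()
            if len(providers) >= self.min_providers
        }


registry = SharedAlertRegistry(window_s=3600.0, min_providers=2)
registry.report(Report("lab_a", "region_x", 1000.0))
registry.report(Report("lab_a", "region_y", 1100.0))
registry.report(Report("lab_b", "region_x", 1200.0))

print(registry.flagged_regions(now=1500.0))  # {'region_x'}
```

Only `region_x` is flagged, because two different providers saw traffic from it; `lab_a` alone seeing `region_y` stays below the threshold. Requiring agreement from several providers is one possible way to cut false alarms, not something the speaker specified.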

>> I'm sorry for my lack of cohesion on

questions. Really, I feel guilty, and I

don't blame you for leaving this

interview thinking, God, he's got worse

over the 8 years, not better. But I was

watching an interview, speaking of

inference, with someone I think from

Baseten, and they were saying that the demand

for inference has grown not linearly but

combinatorially, and that is how we would

see it progress over the next 3 to 5

years. Do you agree with that?

>> If we keep scaling capabilities, that

will definitely happen. The problem is

there are a couple of bottlenecks on

scaling capabilities that are quite

existential. We've talked

about the four core

bottlenecks on capabilities progress,

right? It's context,

compute, capital, and culture. And I think

capital allocation is a huge problem. We've got

to educate people on why

these capabilities are extraordinary.

This is like the biggest

financial bonanza of all time if you

know where to allocate. I mean, there's a

reason why I invested in Anthropic in the

seed round, and now, as you've pointed out,

the returns of the body of

work I've done over the last four years are

attracting LPs at the highest levels.

But we're just getting started. And so

I think some of these

projections you see are correct, if we

unblock the bottlenecks along the way in

compute infrastructure: secure compute

infrastructure that's fungible, that's

standardized. That's the biggest

bottleneck. I think if there's any

reason why OpenAI, Anthropic, Gemini,

and so on don't hit their revenue

targets over the next few years, it's

because they won't have access to enough

compute. I will say there's

a related bottleneck. When I was at

Stanford many years ago as a kid, I

took this class that Peter taught, which

I think was turned into the book

called Zero to One. This is Peter Thiel. I

was an editor for the

Stanford Review, and he had this

quote, right, which is "competition is for losers,"

and, um,

you know, having done this now for 15

years, I've kind of updated my theory of

business, and I think he was

not wrong, but he was insufficiently precise,

which is that I think perfect

competition is for losers. I also think

monopolistic...

>> What does that mean?

>> Perfect competition is for losers.

>> It means that if you have 10 different,

like 50, companies all doing LLM training

or doing coding models, that's a

losing proposition. You

know, perfect competition is like

restaurants. There's no defensibility.

That's why restaurants go out of

business all the time. It's very hard for

them to differentiate. On the other hand,

monopolies are

mafias. Once you have a monopoly at

one part of the stack, they stop

innovating, and instead they try to go up

or down by using the balance sheet to

acquire. They start hoarding resources.

They start saying, "You give me this and

I will force you to basically subsume

yourself to me." And I'm seeing that

kind of behavior up and down the stack.

And mafias are not good for innovation.

What we

need is optimal competition. The optimal

competition

setup is you have three or four teams in

every frontier that are making

extraordinary progress, and so if you

invest in them you get extraordinary

returns, but they're not so comfortable

as to be a monopoly such that they can

stop innovating. And that's important,

because when they stop innovating, as

humanity we're [ __ ]. And so I believe

that we need to transition to optimal

competition in frontier technology, and I

think we need leaders, stewards, venture

capitalists, politicians, and educators to

remind the world that we have already

lived through eras of boom and bust.

And so these

companies, like, what's going to happen, right? Like

you said, Baseten and inference. All

these companies in inference have an

extraordinary growth curve ahead.

But it's not going to be an

extraordinary growth curve if there are

50 inference companies all competing

with each other in a race to the bottom,

which is kind of what's happening right

now. It is not clear to me that we

need 50 inference companies. And it's

not clear to me that VCs are smart

enough to realize that they're just

lighting hundreds of millions of dollars

on fire in a category where having four

or five really good, trusted inference

providers is net good.

>> But will the VC subsidization of 20,

50, 60, 70, whatever number of companies it is, not

make it impossible for the good

companies, the four or five, to progress

through that cycle?

>> It's a bit of a

self-destructive mechanism, because if you

have 50 different companies all

competing for scarce compute resources,

then the folks who are actually

innovating can't get it, and

so they can't do their next round of

product innovation and so on. And that's

the problem when you have this.

>> Is that where we are now, though?

>> That's where we are right now. The

best inference teams are calling me up.

Actually, all inference teams are

calling me up and saying, "Anj, do you

have compute for us?" Because their

product is reselling compute. But it's

been hoarded. It's been hoarded by the

hyperscalers. It's been hoarded by

people who are not innovating but are

sitting on compute. And it's so obvious

to me, now that I've left a16z and I'm running an

independent-ecosystem public benefit

corporation, that the

existential threat to innovation in this

category is lack of compute. That's

why AMP started procuring compute for

the independent ecosystem a while ago.

And so we are trying to find a way to

get these teams the compute they

need to keep innovating. But...

>> What will determine the four or five

inference companies that win versus the

others that don't?

>> Supply. Access to supply.

>> It's that simple.

>> Yep. Compute supply. If you don't have

compute, how do you do inference, man?

What are you selling? You need a product

to sell. If you're making

a steam engine, you need coal.

>> One of

your former partners tweeted last night

that we're going to enter a time where

only model... I'm trying to remember it, and

I wrote down parts of it, but only model

creators access the most powerful models,

and that will obviously power the

services and the application layer, or

the apps that they provide. Do you

believe that will be a world in which we

exist, where model providers inherently

kind of safeguard the best models for

their own apps, à la Claude,

potentially, or not? What Martin is

suggesting is that in competing cases

they will offer a worse model, which

gives them an advantage. As an example,

ElevenLabs, which serves a huge number of

application-layer companies, will

reserve their latest models so they can

offer the best customer support, and then

sell their older models to Sierra and

Decagon, so those companies get a worse-quality

model while ElevenLabs retains the best for itself.

>> The embedded assumption there, right... What

we have learned

empirically over the history of

technology is that if you have

a general-purpose product, like the

iPhone, right, that works for everybody,

then the natural incentive

is to amortize the cost of product

development over the largest

number of users. So if you have a

general model that's good for everybody,

it will be available to everyone. If you

have specialized models that are good

for some people, there will be

product segmentation. And

I think what this is telling us is that

if there are many custom models,

some of them will be accessible,

others will not be. And so, if anything,

I think we should see the fact that

there are frontier model labs

saying, "Hey, here's a new model we

have. It only makes sense for some large

enterprises to access this," as

vindication of the ecosystem

truth that there's

going to be an ecosystem of different

models of different types. There's no

one large god model, because if

there was, I think there would be the

market desire to have, you know, prime

ministers, presidents, and students

all use the same iPhone, because inherently

you can raise the most money and invest

the most product budget dollars in a

general product and amortize the cost of

that across everybody. But if you have

specialized models, yeah, I don't think

they're going to be accessible to

everybody, and they don't need to be. I

think this open and closed access

thing is somewhat overblown. Just

empirically, from a systems

perspective, if you look at the history

of technology, if you have general

products, they're

distributed to the masses. If you have

custom products, they have enterprise

segmentation. Some are accessible to the

enterprise, others are not.

>> Are there foundation model layer

companies that are yet to be built that

will be worth over a hundred billion

dollars?

>> Oh, so many. Periodic is one. I'm

sitting in one right here, right? But

they're not foundation model companies.

I would call them frontier systems

companies. This is the problem. I

kept trying to educate

people, you know, four years ago, and

they'd be like, "Anj, but, you know,

Anthropic is a foundation model company,

and Mistral is a foundation model

company." No, guys, that's just one part

of what they do. Maybe they're starting

there because that's a core

competence,

but there's a reason why, you know,

Anthropic also has a thing called Claude

Code, and there's a reason why

Mistral has something called Mistral

Compute, and there's a reason why, you know,

Microsoft, which is a cloud, also has a

Copilot business. You know, these labels

or categories of "foundation model"

need to be viewed, I think, with more

suspicion than they are. What

matters is the systems co-design,

the full-stack

frontier research loop that

you need to run with customers. And then

later, when that happens, when you say, "Oh

my God, Anthropic

was a

model company, and now they're launching

a product called Claude Code," I was like,

what do you mean? That was part of the

plan all along. Of course you need to

have a pair programmer interface for a

model. Why would you assume

otherwise? Oh, because you just weren't paying

attention, and you had your neat market

maps that your associates were giving

you, and you thought that was

truth.

The commercial community has forgotten

how to build businesses, and they've

forgotten the difference between first

principles and marketing.

That's the problem. That's one of the

other misalignment problems. The ground

truth is that these businesses, machine

learning systems businesses, have

always been frontier systems businesses.

They were never just foundation model

businesses. Now, okay, if you had to

package that up and tell your LPs that

because that was legible to them,

then I can't blame you, I guess. But

with the LPs I work with, I'm very upfront.

I say, "Look, these

categories are going through huge

reinventions, and

when you partner with me, what you get

is a full-stack sort of partner." And I

will tell you the first principles of

what's going on, and these first-principles

insights will change over

time. But you've got to be comfortable with

huge capex outlays in businesses that

end up winning the entire category.

That's what frontier technology is. So, I

don't know, I think foundation models

have been a deeply mis... And this is

part of why I started the class four

years ago. I just thought security at

scale was going through a bunch of

reinvention, and then we reinvented the

class to be infrastructure at scale last

year, and this year it's frontier systems,

because not enough people realize that

to keep the capabilities

frontier moving, you need to think about

these projects, these companies, as

frontier systems projects, not foundation

model projects. Does that make sense?

>> It

does. But when I hear about the capex

required, I respectfully ask, do you

have enough money? I think the $1.3

billion was...

>> Yeah. Like, how much money? Yeah. How much

money do you need, Anj?

>> Well, for the 1.3 gigawatts, which

was kind of our proof of concept

that capital is not a problem. I

think the question is if we want to

scale beyond that,

>> yeah, we need way more capital to be

deployed across the Western Front, in

the United States and US-allied

countries.

>> How much money do you think you need?

>> As long as the capabilities frontier

keeps moving and we want a healthy

independent ecosystem, we'll just keep

raising more capital. There's no end to

that. The day

machine learning stops working as a

systematic way to give humanity more

capabilities, that's when I'll say we

have enough, Harry, but that's so far

out I don't even know how to reason

about that.

>> I could talk to you all day, but before

we do a quick fire, how will VCs be

fundamentally different in 5 years' time

than they are today?

>> Well, again, go back to history, right?

I think there will be a few people like

Arthur Rock and,

um,

you know, Bob Swanson and Mike Markkula

who turn their practice into

institutions, and then there'll be others who

don't. And I think if they don't evolve

themselves for what entrepreneurs of

this era need, then they should

get out of the venture capital business,

because we don't need more bankers. Like,

you know, one of the beautiful things:

my friend Vlad, who runs Robinhood,

recently did this venture

fund thing on Robinhood.

>> Yeah, Robinhood Ventures, I

think it is.

>> Yeah. But, like, when you have

software that can play many of the

coordinating roles of venture capital

firms, why do you need somebody who's

just, to borrow a Marc-ism, a

wrapper on LPs, right? Look,

here's what I'm most concerned

about with the capital ecosystem. Not

enough of the wealth creation

opportunity that's happening in frontier

is being shared with the public, and

that's not good for anybody, because

if you don't share this wealth creation

opportunity with the people who are

supposed to be welcoming this technology

into their lives, which is ultimately the

public, what are they going to do? Say, "I

don't want these..."

>> Dude, with the greatest of

respect, a lot of the money in venture

capital funds comes from endowments,

pension funds, teachers' funds, and so that

wealth distribution should ultimately

trickle down, if we believe in that.

>> But how many venture capital firms were

in the seed round of entropic?

>> Oh, none.

>> That's the answer for you. And that's happening again and again and again. There's a huge misallocation of public capital into venture managers who are not capturing enough value in frontier AI. Instead, they're investing in a bunch of stuff that's not going to exist, and the public's going to be mad.

>> Did you put 300 million bucks into Anthropic in one go?

>> I've had the privilege to invest many hundreds of millions of dollars into Anthropic across several rounds, from the first to the most recent one. So I consider myself lucky. I intend to give most of that away to public benefit causes and public benefit education programs. And I think we're at the very beginning of Anthropic's journey on commercial progress.

>> Dude, I'm going to do a quick-fire round with you, because otherwise I'm going to take all day. If you could advise an LP investing in venture funds on one thing, what would you advise them?

>> Educate yourself. Take the class. Do all the readings. Don't skip the hard work. Too many LPs are outsourcing their hard work, the work they're supposed to be doing as capital allocators, which is understanding what's actually going on and then deciding which venture managers and allocators have a unique, defensible advantage at the bottlenecks. I would be investing in the bottlenecks, basically.

>> Dude, too many GPs are not doing the work. The number of GPs who've never built anything with AI is astonishing.

>> I agree. Completely agreed.

>> And I don't think you can be... You'll laugh at me, but I've built with every different vibe-coding provider. I'm trying to turn my media company into an AI-first media company. It's pathetic compared to the [ __ ] that you do, but at least I'm trying. I'm seeing the bottlenecks of Supabase integrations and everything that comes with it. And you learn by building. I think if you're not doing that in the beginning, you shouldn't be investing, period.

>> I completely agree. I mean, there's a sovereign country that came to me at the end of last year and said, "We want to bring 26 of our ministers to your house and do a one-year program, a frontier program where we learn what's going on in AI from lectures and so on, and then we want to do a deployment project where each of our ministers actually builds AI agents." And I said, you know what, if you take Stanford CS153, it's a microcosm of this course I'm doing with this country, the sovereign fund that we partnered with. And that's the way you have to work: do the work to read the literature, understand what's going on in research, and then deploy yourself and build tools. The Stanford CS153 class project is the one-person frontier lab, because I genuinely believe that what would have taken 50 people to do four years ago, you can now do with one person and the right AI tools. And as a leader, if you haven't played with these tools, deployed yourself, and built your own agent, I don't think you understand what's going on. I'm not letting the ministers who are taking this class with me graduate until they build and deploy agents. I've told them they're not getting their graduate certification otherwise.

>> Have you told your wife that you've got 26 ministers coming to your house?

>> She let me co-host...

>> Date night, Anj.

>> She let me co-host them at our house in SF, you know, a few weeks ago. And I'm very lucky to have Viv. I don't deserve Viv, I'll tell you that. But she's mission-aligned, and we both believe that the best thing we could be doing with our time is educating at scale.

>> What makes Dario so good that people on the outside don't see?

>> One: sheer scientific brilliance, truly world-class technical ability in his domain. Two: an obsessive desire for truth-seeking, to keep reasoning, to keep doing experiments. He's a physicist at heart, right? I think Dario is a physicist at the end of the day; he's not actually a computer scientist. And a world-class physicist (and he's an applied physicist) tries to derive general laws of reality by looking at data and running empirical experiments. He's an empiricist, and he has an obsessive desire to be a good empiricist. And the third is a culture of mission alignment. He says: this is our focus, this is our mission, no drift, we won't take shortcuts, we are willing to make huge trade-offs to hit this mission. And that attracts the best talent, incredible talent. In the face of criticism from people saying you're a mercenary, you're blah blah blah, you're just doing this for profit, no, actually, it turns out there's a ruthless desire to stay focused on the mission. And that results in hard trade-offs and priorities. If you're not aligned on that mission, then you'll just think he's crazy or, you know, evil or whatever. It's crazy how many ad hominem attacks I've seen people make against him. But he's got that clarity of mission.

>> What have you changed your mind on in the last 12 months?

>> You know, the biggest one is health. Both my family members and I have had health experiences that made me realize we all just don't know how much time we have on Earth. And that makes you stop taking for granted how much time we have, so I started taking time much more seriously. But I would say, and this was my kind of... You know, every lecture I do at Stanford, we talk a lot about scaling laws and technical stuff, but I also give the kids like an Andre's life scaling