The 3 Levels of Context Engineering

Transcript

Most people treat coding agents like

chatbots. They go back and forth until

compaction ruins their flow. In this

video, I'll show you the three tiers of

context engineering and why the third

tier will change the way you code with

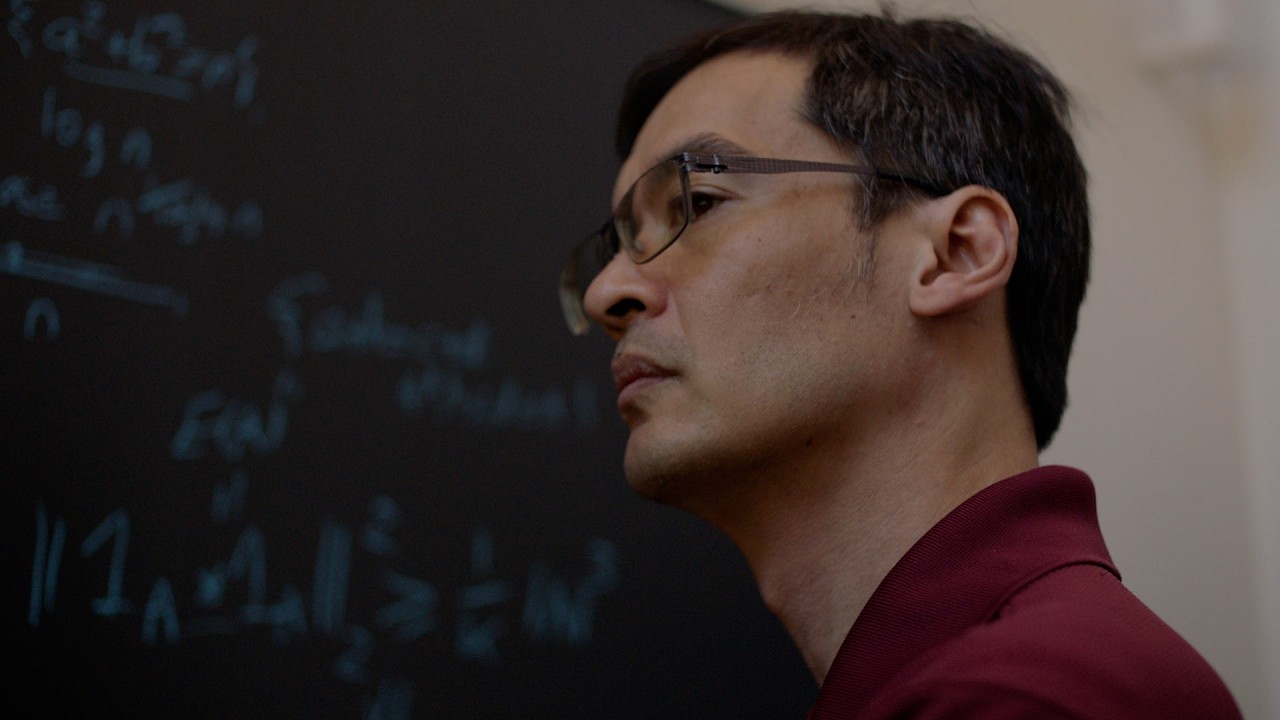

agents forever. I'm Roman. I published a

top 3% paper at Nurips, the largest AI

conference in the world. Now, I'm on a

mission to become the best AI coder.

There are three tiers of working with context. 90% of users fall into the first tier. They vibe code their sessions, expressing their intent to the agent, allowing compaction, and continuing as if nothing happened. This causes severe context rot and information loss, leading to catastrophic performance decreases and bug-ridden code. Since most people learned AI through chatbots, this comes naturally, and they don't even know that there is a better way.

The second tier is the intentional developer, where 9% of users fall. They understand that context rot exists and that intentional context engineering is the best way to work. They frequently compact manually, clear context, and curate handoff documents. However, they still suffer from context rot and struggle to get the models up to speed with where they are. They still treat conversations with coding agents as linear.

Then there's the third tier, trajectory engineers. After thousands of hours of trial and error, they have internalized the fact that conversations with LLMs don't have to be linear. They frequently use /rewind to jump back in time with a model, not just to try again, but to keep context lean. They fork sessions and parallelize different trajectories, exploring many at once and picking the best outcome.

But why is trajectory engineering possible with LLMs? Well, LLMs have no internal state between requests. Every single time you hit enter, the model processes your request from scratch, just with your request appended to the conversation array. The chat interface just gives you the illusion of continuity. Many people who know this think of the statelessness of LLMs as a limitation because you constantly have to reteach the model things. But this is actually a superpower, and it allows you to time travel through conversations with models. Human memory degrades over time due to its statefulness. Captured notes are just a lossy snapshot of the place you were mentally when you wrote them.
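To make the statelessness point concrete, here is a minimal Python sketch. It assumes nothing about any real model API: `fake_model` is a hypothetical stand-in whose reply depends only on the conversation list it is handed, and `send` and `rewind` are illustrative helper names, not Claude Code internals.

```python
from typing import Dict, List

Message = Dict[str, str]


def fake_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for an LLM call.

    The reply depends only on the messages passed in, never on earlier calls.
    """
    last_user = messages[-1]["content"]
    return f"reply #{len(messages)} to: {last_user}"


def send(messages: List[Message], user_text: str) -> List[Message]:
    """Append the user's turn, call the model on the whole list, append its reply."""
    with_user = messages + [{"role": "user", "content": user_text}]
    reply = fake_model(with_user)
    return with_user + [{"role": "assistant", "content": reply}]


def rewind(messages: List[Message], keep: int) -> List[Message]:
    """Jump back in time by keeping only the first `keep` messages."""
    return messages[:keep]


history: List[Message] = []
history = send(history, "Read the repo and summarize it")
history = send(history, "Try approach A")

# Rewind to just after the first exchange (2 messages) and try another path.
earlier = rewind(history, 2)
branch_b = send(earlier, "Try approach B")

print(len(history), len(branch_b))
print(branch_b[-1]["content"])
```

Because the stand-in model only ever sees the list it is given, the rewound branch carries no trace of "approach A". That is the sense in which a conversation can be replayed from any earlier point.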

In contrast to human memory, agent memory can be respawned with 100% fidelity. The state you load implies the trajectory of what the model's response will be. LLMs are incredibly sensitive, meaning that small perturbations in their input space, their context, can lead to big trajectory changes in their output space.

So, what does this really look like in practice? Well, the trajectory engineers are modern-day time travelers.

In Claude Code, you can activate this time travel by pressing Escape twice. You can jump back in time to any previous point in a session and explore different trajectories.

I like to think of context as a tree. The tree has a trunk which lays the foundation for the rest of it. This trunk is analogous to your important and reusable information, maybe context about your repo or the plan you are implementing.

Traditionally, context will grow from this trunk until the tree falls down, aka the user hits clear. But why let the tree grow to an unstable point when we can keep trimming it down to the trunk?
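The tree picture can be sketched in a few lines of Python. This is only an illustration under assumed names (`ContextTree`, `grow`, `clear`, and `trim_to_trunk` are hypothetical, not part of any agent tool): the trunk is the reusable context, and everything appended after it can be cut away without losing the trunk.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ContextTree:
    """Toy model of a session: a fixed trunk plus growth on top of it."""

    # Reusable context, for example repo notes or the plan being implemented.
    trunk: List[str]
    # Everything added to the session after the trunk.
    growth: List[str] = field(default_factory=list)

    def grow(self, message: str) -> None:
        """Normal back-and-forth: the context keeps getting longer."""
        self.growth.append(message)

    def context(self) -> List[str]:
        """What the model would be sent on the next turn."""
        return self.trunk + self.growth

    def clear(self) -> None:
        """The tree falls down: trunk and growth are both lost."""
        self.trunk = []
        self.growth = []

    def trim_to_trunk(self) -> None:
        """Cut the growth but keep the trunk."""
        self.growth = []


tree = ContextTree(trunk=["repo overview", "implementation plan"])
for step in ["explore module A", "dead end in module A", "explore module B"]:
    tree.grow(step)

print(len(tree.context()))  # trunk plus growth
tree.trim_to_trunk()
print(tree.context())       # only the trunk remains
```

The design choice worth noticing is that `clear` throws the trunk away with the growth, while `trim_to_trunk` keeps the expensive, reusable part of the context.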

Once you become a trajectory engineer, you can intentionally create branches, explore, and then trim the branches back. You can gather more context on the main branch, use that as recon, and then trim back with /rewind. This allows you to explore perturbations of different magnitudes to the input space, letting you explore what the model has to say and refine your prompt's details in order to reach or approach the optimal trajectory: the optimal output that a model could have in your situation. I personally use /rewind multiple times per session, whether it be to try an approach with a different prompt or just to trim session context down.

Now, let me motivate context trimming with an example. If you are deep into a coding session and your agent implements a bug, what you can do is fix the bug by going back and forth with the agent. Now that the bug is fixed, instead of proceeding with your work, you can trim out the context related to fixing the bug, as it is no longer relevant. Time travel back to before the bug was spotted, let the model know what happened and how you fixed it, and proceed from there.

Once you realize that you can respawn, parallelize, and jump back to any state in a context window, this unlocks the true skill ceiling of agentic coding, and the sky is the limit. Context engineering in this way will widen the gap between you and other AI users due to the power and quality difference of experiencing no context rot and approaching the optimal trajectory.
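The bug-fix trimming example above can be sketched as follows. This is a hedged illustration, not how Claude Code stores sessions: the session is a plain Python list, `trim_after_fix` is a hypothetical helper, and the message strings are made up.

```python
from typing import List


def trim_after_fix(session: List[str], checkpoint: int, summary: str) -> List[str]:
    """Rewind to `checkpoint` (the message count before the bug was spotted)
    and replace the whole debugging exchange with one short summary note."""
    if not 0 <= checkpoint <= len(session):
        raise ValueError("checkpoint must point inside the session")
    return session[:checkpoint] + [summary]


session = [
    "user: implement the export feature per the plan",
    "agent: implemented export (contains an off-by-one bug)",
]
checkpoint = len(session)  # state just before the bug was spotted

# The debugging back-and-forth, only useful until the bug is fixed.
session += [
    "user: the last row is missing from the export",
    "agent: investigating the loop bounds",
    "user: still missing, check the range end",
    "agent: fixed, the range end was exclusive",
]

lean = trim_after_fix(
    session,
    checkpoint,
    "user: note, export had an off-by-one in the range end; it is fixed now",
)

print(len(session), "->", len(lean))
```

The four debugging messages collapse into one note, so later turns carry the outcome of the fix without the detour that produced it.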

That's why I'm calling these new-age time travelers trajectory engineers, because we aren't just engineering the context or the prompts anymore. We are engineering the output of a black-box system. If you want to learn how to build your app or transform your business by building with coding agents, then join my free community. It is the number one agentic coding community on Skool. I'll see you in there.

Interactive Summary

This video introduces three tiers of context engineering for coding agents. The first tier, used by 90% of users, involves natural conversation leading to context rot and performance degradation. The second tier, for 9% of users, involves intentional context management like manual compaction and clearing, but still treats the conversation as linear. The third tier, 'trajectory engineers' (1%), understands that LLM conversations do not have to be linear because the models are stateless. They use features like /rewind to jump back in time, fork sessions, and explore different development paths, effectively treating context as a tree that can be pruned and branched. This 'time travel' capability, enabled by LLMs processing each request from scratch with the new message appended to the conversation, allows for precise state management and exploration of optimal code trajectories, leading to significantly improved code quality and a wider skill gap between users.
