Vibe Coding With The New Codex macOS App


Hello everyone, and welcome back to another video. In this video, I'm gonna be vibe coding with the newly released Codex macOS application.

You can see here that I have a couple of agents running in the left sidebar. What this is, is just Codex in an app. It gives you a slightly better UI, but there are also some key features, like automations, that I want to draw your attention to. We'll get into that in the video. I'll share some of my thoughts, and we'll also try it out, set up some automations and some skills, and I'll show you guys how to do that.

But before we get started, I do have a light goal of 150 likes on this video. So if you guys haven't already liked the video, make sure you do so. Also make sure that you subscribe to BridgeMind and join the fastest growing vibe coding community on the internet right now, the BridgeMind Discord. Check it out at the link in the description below. I believe that we just surpassed 4,700 members. If you want the latest AI news, the latest vibe coding news, and the latest workflows that are coming out, this is the fastest growing community right now. So go check it out and join. But with that being said, guys, let's get right into the video.

All right, so this one right here actually just finished. All I had it do was, I said, hey, review the BridgeMind UI and find any opportunities for us to improve SEO in the project with better best practices. And you can see here that it found these things, okay?

What's interesting is that you can do things like clicking Dismiss. So for example, this one here: what happens when we click Dismiss? Literally, you can click Add and then Dismiss, and I'm curious as to what that actually does.

Up here, if I click Run, it says: "Tell Codex how to install the dependencies and start the app." So that's an important thing to know: you can basically set up your environment to run inside of Codex. If you want to go through that process, like if it's a very simple monorepo, you can also do that, which is very, very helpful.

But what does this even do? I click Dismiss, I click Add, and I'm not sure what this actually does. What is this P2, P2? Do you guys see this? It's basically saying Add or Dismiss, and it's in this very unique card component. And then it gives me a summary of improvements. So let's try... oh, it's a finding. Okay, so do you guys see this? You can click Dismiss, and now you can see that the number of findings went down. So when you click Add or Dismiss, it adds to or removes from those findings. If I don't like a finding that it had, I can basically dismiss it, and it won't act on it in the follow-up changes.

But let's now go to the next prompt: "I now want you to focus in on these seven findings and create updates to the code so that we actually improve our SEO based off of your findings. Update the code until you finish all seven findings and improve them respectively."

Okay, so we'll just do that very simple prompt. I'm not super worried; it's not a very complicated part of our codebase. It's just SEO, right? So not super complicated.

What does this do here? "Codex automatically runs commands in a sandbox." It also has hold-to-dictate, which you can also use. Allow Codex to use my microphone. "Hello, Codex, how are you doing?" So it's not better than Wispr Flow, but it's going to be useful for people that don't have Wispr Flow or BridgeVoice, which is coming soon. But let's go back over here.

So it reviewed this one here. This one was looking for any bad code or bugs, and you can see these here. This is interesting, though, because this one didn't really give me the same kind of findings. It output them in a table, which I did ask it to do. But if you compare it with this one, which I had it do just a couple of minutes ago, that one said, okay, here are these seven things, and now it's working on those, right? This one didn't give me those findings. So it's interesting that it didn't behave like that. What I will say is that this is a completely different user interface than what you would experience in a CLI, right? If you were just using Codex in the terminal.

So let's now send: "I want you to now review the code and implement updates so that you fix these findings and fix these actionable issues that you discovered." So we'll fix these here.

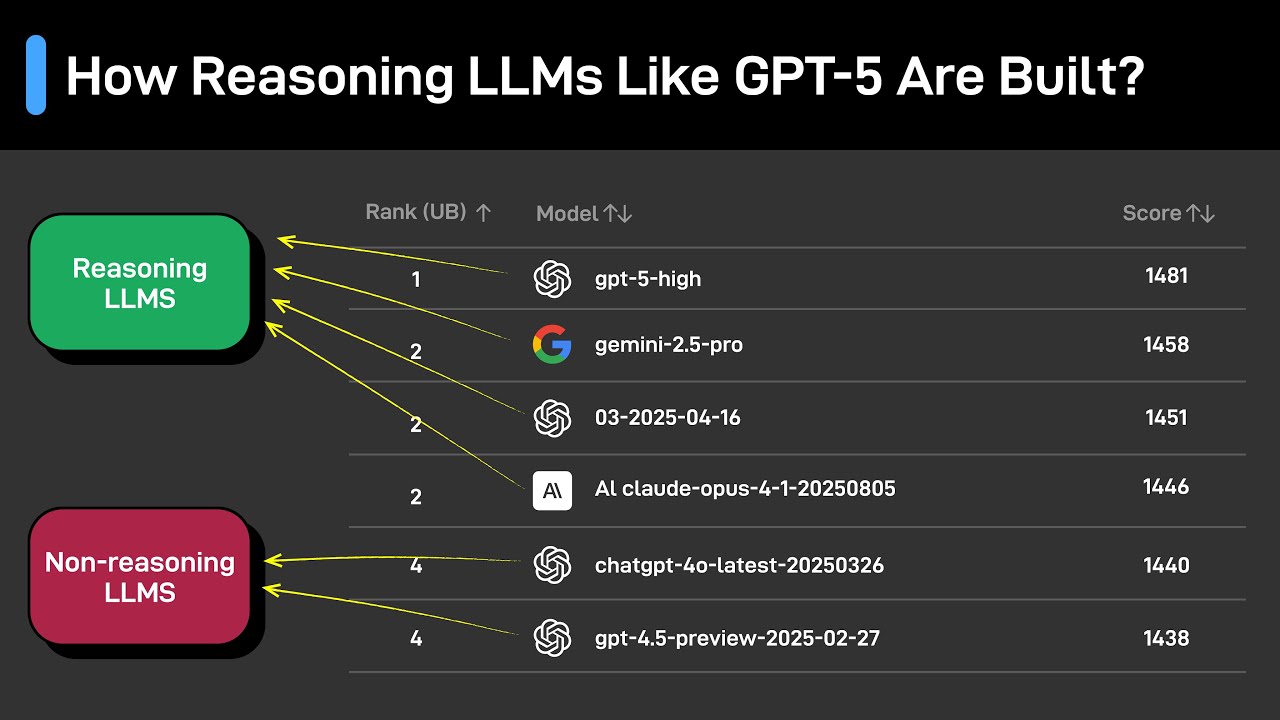

Let's put the reasoning on extra high, because that is one of the best models. Codex 5.2 is a good model. I think a lot of people are very stuck on Opus 4.5, and Opus 4.5 is obviously also the best model, but do not sleep on this GPT-5.2 extra high model. It is a very capable model. One of the only issues with it is that it's just incredibly slow, so just be mindful of that. But hey, do not sleep on OpenAI or Codex, because it's actually pretty good if you are using this highest model. It's just expensive.

So with that being said, let's let these two agents work while we try a couple of other things. There's Toggle Terminal, which is Command+J, so let's try toggling a terminal. This is just your basic terminal, so we can see the directories inside. How many can I open? Can I open multiple? Okay, it's literally just a single one. Can I do split terminals? Okay, literally no. So you can only have one terminal inside of this, which is actually not that good. Can we do Command...? Yeah, literally only one terminal, which is not good. But you do have a terminal.

You can also toggle the diff pane. Okay, so let's let that load in.

I do have a lot of changes made inside of this project. When I initially opened up this project, it opened up to the BridgeMind folder, right? Because that's where all my repositories actually live. So I have the BridgeMind API, I have the BridgeMind UI, I have the BridgeMind database; I have all these projects. So this is going to be the diff for all of these. There's Hide File Tree, so let's hide it. Okay. You can see the diffs, but I don't think that you necessarily need to see that.

But in terms of things that are a little bit more important, there's this one here: Automations. This is a feature that you want to take note of, because this is different. This is something that you're not going to get natively in the Codex CLI, but that you are going to get with the Codex app.

For example, here are some examples that it gives us. "Automations: automate work by setting up scheduled tasks." Scan recent commits since the last run (or the last 24 hours) for likely bugs and propose minimal fixes; draft weekly release notes from merged PRs; summarize yesterday's Git activity for a standup; create a small classic game with minimal scope.

So you have a lot of options. I don't know how "create a daily classic game" is an automation, but I guess it is.

But for example, let's try creating a new automation. It even gives us a placeholder, like "Check for Sentry issues," which is a very interesting use case, because you could have this set up with Sentry to be able to check for Sentry issues.
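One of the suggested automations scans recent commits "since the last run or last 24 hours." Outside the app, the commit-gathering half of that task could be sketched with plain Git, as below. This is a minimal sketch that assumes `git` is on your PATH; the function names are hypothetical, and it is not how the Codex app implements automations.

```python
import subprocess
from typing import List, Optional


def recent_commits_cmd(since: Optional[str] = None) -> List[str]:
    """Build a `git log` command listing commits since `since`.

    Hypothetical helper; defaults to the last 24 hours, mirroring the
    suggested automation's fallback window.
    """
    window = since or "24 hours ago"
    # Passed as a list, so "24 hours ago" needs no shell quoting.
    return ["git", "log", "--oneline", f"--since={window}"]


def recent_commits(repo_path: str, since: Optional[str] = None) -> List[str]:
    """Run the command inside `repo_path` and return one line per commit."""
    result = subprocess.run(
        recent_commits_cmd(since),
        cwd=repo_path,
        capture_output=True,
        text=True,
        check=True,  # raise if the folder is not a Git repository
    )
    return [line for line in result.stdout.splitlines() if line]
```

Passing the last run's timestamp as `since` gives the "since the last run" behavior; the bug-finding and fix-proposing part would still be the agent's job.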

But one thing that I want to do is scan... we'll do "Scan the BridgeMind..." And can I add projects? I can't. Oh, I select a folder. Okay. "Scan the BridgeMind API for security vulnerabilities." Okay, I do not know why I just typed that out, but we can now choose a folder. We can't choose a subfolder, though, which is interesting: once we're actually in the project, you can't choose a subfolder. "Completely scan the BridgeMind API for security vulnerabilities." Okay.

And then we can schedule this to run. Let's just test it out, right? Let's see what happens when we do this. Let's set it for 2:52 PM, because we're about to cross that threshold, and it's going to be set for daily. And let's just create this. Okay, so this starts in two minutes, daily at 14:52. So we'll see what this automation actually looks like.
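The app reports that a daily 14:52 schedule "starts in two minutes." As a rough illustration of how the next run of a daily schedule can be computed, here is a minimal Python sketch. The function `next_daily_run` is hypothetical, and this is not the Codex app's actual scheduler.

```python
from datetime import datetime, time, timedelta


def next_daily_run(now: datetime, at: time) -> datetime:
    """Return the first datetime at or after `now` that falls on the daily time `at`.

    Hypothetical helper for illustration only.
    """
    candidate = datetime.combine(now.date(), at)
    # If today's slot has already passed, roll over to the same time tomorrow.
    if candidate < now:
        candidate += timedelta(days=1)
    return candidate


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 14, 50)  # arbitrary example date and time
    run = next_daily_run(now, time(14, 52))
    print(run, "starts in", run - now)
```

Creating the automation at 14:50 yields a first run two minutes later. Creating it at 14:53 would push the first run to 14:52 the next day.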

Once you create an automation, this is what it'll look like. All your automations go here, and then we'll see what happens in two minutes with this automation. Basically, what I'm assuming will happen is that this automation will run, launch its own Codex window, and scan the BridgeMind API for security vulnerabilities. So that's really, really interesting. That's not something that we have natively with the Codex CLI. So this is a new feature that's different, and it may be worth getting the app for this purpose alone: you cannot set up automations natively in the Codex CLI.

Another one is skills. Now, skills aren't anything new in Codex, but just having this UI for them is pretty nice. You have a spreadsheet skill, a Sora skill, a Sentry skill, which is nice, a screenshot skill, and an image gen skill, which is actually kind of interesting.

So let's install this skill here. What would be interesting is: could we use it to create custom images for our website? Let's actually try that out.

Let's go over to a new thread here. I'm pretty sure you can just type @ and then... could I just add the image gen skill? Or how do I actually add that skill? Yeah, I don't see anything there. So let's go back to Skills, and I think I can just click Try. Once I click Try, what you're going to see is, okay: "Generate or edit images for this task and return the final prompt plus selected outputs." It just dropped in a baseline prompt. But let's use medium reasoning so it doesn't take too long.

"I want you to use the image gen skill. I want you to create a new section on the homepage of the BridgeMind UI website, and in this section I want you to create a new infographic image about vibe coding and place it in there. It should be highly realistic and use real people. Create a highly realistic image of people vibe coding, create a new section underneath the hero in the BridgeMind UI website, import this generated image into this section, and update the code respectively."

Okay, so we'll see if this works. I'm kind of putting this to the test, because a useful case would be using image gen hand in hand with a prompt that says, okay, I want you to use image gen, and I also want you to use it to create and update this new section. That's actually useful, right?

Okay, here it says "print OpenAI API key." Interesting. So it's printing my OpenAI key; I may need to check through the video to see if it printed. Okay, so that looks fine.

Let's check out this automation. This automation is now running. So it looks like... oh, interesting. Okay. So this automation ran, and it said: "I couldn't run a vulnerability scan because there's no API backend code in this workspace. The BridgeMind API directory is empty, and a repo-wide search didn't reveal any server sources. The relevant path is in a worktree. What I need to proceed: the correct location of the BridgeMind API code (repo path)."

Okay, so that's actually interesting, because when I set up this automation, I wasn't able to... how do I manage my... oh, geez. Okay, Unarchive. Okay, good: Automations. How do I...? Okay, let's go to a new... can I not manage it? Okay, here's my automation.

Here's a problem with automations: I can't specify, okay, this is the BridgeMind API. There's no way to add a particular project, and the BridgeMind API is inside of this folder.

So maybe to run this automation, you literally have to click Add New Project, and then we'll go to Desktop, then to BridgeMind, and then to the BridgeMind API. Maybe that's the best practice here. So let's go to the BridgeMind API and see what happens.

We'll go to this project, and then we'll go to Automations. Then maybe we can update this here, so that instead of being in that project, it's specifically in this project. Then we'll schedule it for, let's try, 2:56 PM, so it runs right now. Okay, perfect. Save.

Okay, so is there a way to test this? It literally has a run option here, so let's just test this and see what happens. So this is running. Okay, we'll see what happens there.

But what you guys just saw is that it wasn't able to run successfully before. That doesn't quite make sense, though, because it literally said the relevant path is in a worktree. So the Codex app does inherently use worktrees, which is an important thing to note.

Let's check out how this one's doing; it's running right here. So this is a scan. Okay, this is the one that we're testing, and it looks like it's now working. So that was a workaround that did work. You can see the path: Users, Codex, automations, "scan the BridgeMind API for security vulnerabilities," and that does look good. It has a memory Markdown file and an automation.toml file. So this is working well, and it's now going. It's checking out my CSRF guards and middleware and my auth services. So that looks good.

This is now kicking off another one, because it did just hit 2:56 PM. Remember, I hit test, and I also scheduled it for that time. So this is an applicable use case where I can actually see you using Codex for automations. That's pretty interesting, and it's not something that we have inside of Codex natively.

Let's check out how these did. This one said: "I'm ready to generate the image and wire it into the homepage, but the image tool requires an OpenAI key, and it isn't set in this environment. Please set OPENAI_API_KEY in your shell and tell me when it's ready, and whether you want a specific aspect ratio." Okay. So it looks like it can do it, but I'm going to need an API key for it.
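The image gen skill stopped because `OPENAI_API_KEY` wasn't set in its shell. Here is a minimal sketch of that kind of pre-flight check, assuming a script that reads the key from the environment. The function name is hypothetical and is not part of the skill. The check also never prints the key's value, which is relevant given the worry above about a key showing up on screen.

```python
import os
from typing import Mapping, Optional


def require_openai_key(env: Optional[Mapping[str, str]] = None) -> str:
    """Return OPENAI_API_KEY from the environment, or raise if it is missing.

    Hypothetical pre-flight check for illustration; the real skill's code may differ.
    """
    env = os.environ if env is None else env
    key = env.get("OPENAI_API_KEY", "").strip()
    if not key:
        # Report only that the key is missing; never echo secret values.
        raise RuntimeError(
            "OPENAI_API_KEY is not set; export it in your shell before retrying"
        )
    return key
```

In a shell, `export OPENAI_API_KEY=...` before launching the tool makes the variable visible to processes started from that shell.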

So here's what I don't understand about using that skill: why didn't it just use my actual OpenAI account? If you look at this, I don't think that I'm logged in to OpenAI. I'm not even logged in, which is a really interesting approach. I just noticed that; I didn't even see it before. But if you guys look at this, you'll see I'm not even logged into my OpenAI account. This is basically just a shell. It's just an app, and I'm not logged in anywhere. So it wasn't able to natively grab anything from my plan. It has me use an OpenAI API key, which is kind of like... why would you do that? I want to just use it through my plan. So I don't like that, and we're not going to try that out. But it looks like if you did configure it with an OpenAI API key, it would work. So definitely take note of that.

But in general, let's go over to Personal and check out what you can do with this. Nothing important here. Configuration: nothing important there either. Personalization: you can add your own custom instructions. MCP servers are right here, and it gives you a nice UI for that. Your skills are right here again, the same UI that we saw a second ago. Here is Git, where you can add commit instructions and pull request instructions. Here are your environments; you can set up different environments. Here are your worktrees, and then here are your archived threads. So it's pretty basic. I mean, it just released yesterday, so I'm not super impressed.

Here is what it found. It found this here. So let's see: "OK, great finding. I now want you to fix this security vulnerability." OK, so we will put that there.

But also, up here, this is one thing that they touted highly in their video. If we actually go out here, what did they actually say on X? They literally just said: "Introducing the Codex app, a powerful command center for building with agents, now available on macOS." So it seems to me like they didn't put a ton of effort into this.

There are some nice features. I like automations, and I mean, it's kind of a good thing, right, that they didn't go overboard. But at the same time, really, the only big difference I'm getting out of this is that automations could potentially be big, if you have some good use cases for them like I do. Scanning your API for security vulnerabilities would be useful, and so would scanning for Sentry issues and fixing them.

Also, skills. But you can set up skills natively in Codex as well, can't you? So I don't see much new there, although they do offer a ChatGPT-like look where you can add skills and use them easily. So that is different. And just in general, it does give you a better UI, right? A better UX for using this.

So I will say I'm not super impressed, but it's definitely interesting. Automations is interesting. I may use it if I want to set up automations, because I can't really set up automations in the Codex CLI. Please, somebody correct me if I'm wrong, but I've never set up an automation in the Codex CLI before, because you would have to watch the instance, right? This is obviously different: you can actually set up these automations.

And look at this one. This one just finished, or that one. What was that? Okay, these all just finished. Okay, cool. Did this one just time out on me? For this one, I said, "I now want you to fix the vulnerability," and it just kind of timed out: "The model is not supported when using Codex with a ChatGPT account." Okay, that's interesting. I don't know why it did that. So maybe it's a little bit buggy.

But in general, automations is what I see as the biggest thing here. Skills, obviously: it's just nice to have a UI for that. And then there's being able to manage your projects and your different agents, rather than having a Warp interface like we normally do. I'm definitely still more of a fan of the Warp interface, because I think getting locked into one model provider is not the best. When I'm using Warp, I can launch up to eight windows all at once and watch them at the same time. I can use the Codex CLI, and I can also use Opus or any Claude models.

But I think the biggest thing that I'm seeing is these automations. That is interesting. I would say, hey, if there's anything that you want to check out the Codex macOS app for, definitely check it out for these automations. It's very, very interesting that you could have one that's paying attention to Sentry logs or scanning your API for vulnerabilities. So I'm not going to use this a ton, but I could potentially use it for automations. That seems like something that would be nice to have, so that I have something running in the background that I can just set up once to run automatically every day or something.

So it's definitely interesting there, but all in all, I'm not super impressed. I mean, it's just a user interface that's going to let you use Codex; it's not a big deal for anybody who's really serious.

But with that being said, I'd definitely suggest checking it out if you think that you could have a use case for automations. I think that that's valid.

Also, they were really hyping up the ability to go in here, right, and do commits and stuff. Being able to commit via the UI, I mean, it's okay. I think that for the most part now, people are just having AI do their commits and manage Git for them. So I don't think that this is that big of a deal. It's just a user interface for Git, but it's a nice user interface. All in all, I feel like that's really all it is. There's no new underlying technology or feature other than automations over here, which I'd definitely recommend checking out.

So that's my review of this new Codex application. I would definitely suggest checking it out, just so that you're staying tuned in and on the cutting edge of these technologies. But with that being said, guys, I'm going to wrap up the video here, and I will see you guys in the future.

Summary

The video provides a review of the newly released Codex macOS application, exploring its user interface and standout features such as automations and skills. The presenter demonstrates how to set up scheduled background tasks, like scanning code for security vulnerabilities, and discusses the strengths and weaknesses of the GPT-5.2 extra high model. While the presenter highlights the convenience of the UI and the unique value of the automation system, he also points out current bugs and the manual requirement for API keys in certain integrated skills.
