AI Mass Surveillance and Weaponry Situation

Transcript

Here's something I've been glued to that

I think more people need to be made

aware of because it's very serious.

Right now, there is a slapboxing match

that's been going back and forth between

Pete Hegseth and Anthropic. And really,

it's the entire Pentagon versus

Anthropic. Uh they're behind Claude,

which I'm sure many of you are familiar

with. The military wants unrestricted

access to Anthropic's AI and they have a

contract in place and right now

Anthropic is planting its feet and lat

spreading, refusing to be bullied into

giving them unrestricted access.

>> The CEO

of one of the biggest AI companies in

the world is meeting with Defense

Secretary Pete Hegseth today as the

Pentagon threatens to essentially

blacklist that company, Anthropic, from

lucrative government contracts if the AI

company doesn't lift its restrictions on

how the military can use its technology.

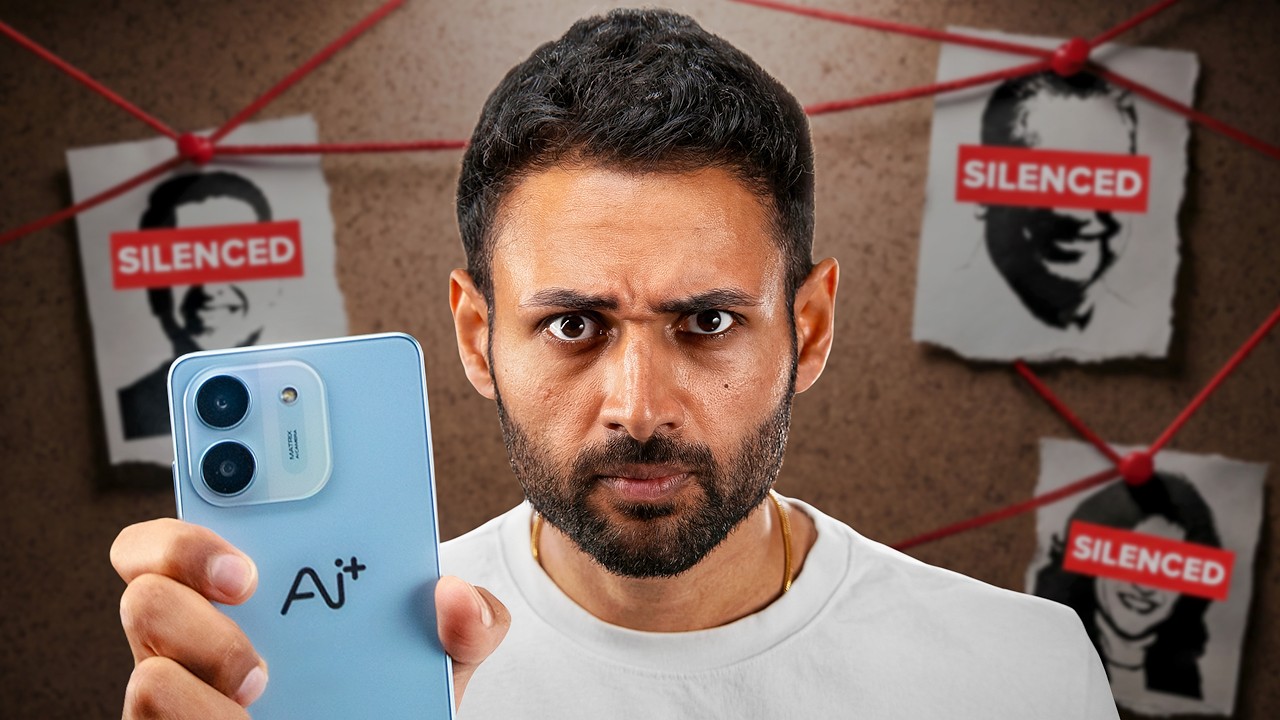

The Pentagon has a $200 million contract

with Anthropic. And a source tells CNN

that the company has concerns over two

issues: AI-controlled weapons and mass

domestic surveillance of American

citizens.

>> Sounds pretty reasonable to me. Yeah,

that one passes the smell test. Those

are very legitimate concerns. And

Anthropic wants these restrictions in

place to ensure that its AI can't be

used for mass surveillance of American

citizens or autonomous AI weapons. And

because of their reluctance to concede

on that, Pete Hegseth has been stomping

his feet, bench pressing 315, and has

given them a deadline till Friday to

play ball with them or risk being

blacklisted. And the reality of the

situation is even if Anthropic doesn't

budge and they wipe their ass with the

contracts,

numerous other AI companies will step up

and just gladly take the bukkake from the

Pentagon with completely unlimited

access, no restrictions to their AI. But

right now, Anthropic is the most

powerful and the one that they really

want, which is why they're trying so

hard to get them to release these

restrictions and let them use it for

whatever they want, which again, the

main things from Anthropic that they are

very concerned about is the mass

surveillance of American citizens and AI

autonomous weaponry. Again, I think

those are very reasonable restrictions

to keep in place to ensure that the AI

can't be used for those two things

because it shouldn't be. If

anyone here has ever watched Terminator

or any sci-fi movie where AI assumes

direct control of the military and

everything goes tits up, you've seen

this exact story line play out in those

montages that give you the lore

breakdown for how we got there. Like,

it's crazy to see it unfolding now in

real time in the real world. Obviously

exaggerating a bit, not quite to that

level yet, but it is extremely serious

and I think any reasonable person would

agree with these kinds of restrictions

because AI shouldn't be used for mass

surveillance on American citizens. And

under no circumstances should physical

attacks be determined by AI with no

human input whatsoever. It shouldn't be

making targeting decisions without human

input. Like fully autonomous AI weaponry

is a terrible idea. And actually

the CEO of Anthropic did an interview

going over these two things because he's

not backing down on them.

>> That's one reason why I'm, you know, I'm

worried about the, you know, the the the

autonomous drone swarm, right? So, you

know, the constitutional protections in

our military structures depend on the

idea that there are humans who would, we

hope, disobey illegal orders. With fully

autonomous weapons, we don't necessarily

have those protections.

>> Now, for what

it's worth, I'm no fan of Anthropic.

There is no AI mega corporation you'll

catch me waving the number one foam

finger around for and advocating for and

glazing or anything like that. I think

all of these companies are greedy as

controlled by shady vampire ghoul

creatures. And I know Anthropic has

built up somewhat of a reputation for

being the good guys in AI, but I am not

one of the people that actually believe

that at all. But he's 100% right. Fully

autonomous weaponry cannot disobey an

order, even an illegal one. It will

always follow through no matter what,

unquestionably. And that's not a good

thing. That's not a positive thing. I'm

sure a lot of you have probably seen a

lot of those like YouTube videos or like

a long time ago the Reddit story that

would circulate like once a year for the

big upvotes talking about the man who

potentially saved the world, Stanislav

Petrov, who was an officer during the

Cold War era and while on duty he

received a ping from the early warning

system alerting him to incoming United

States missiles. He believed that it

could have been a false alarm. So he

decided to go against protocol and

instead of reporting the incoming

missiles, he waited. And it turns out

his hunch was correct. It was a

malfunction in the early warning system.

But the standard protocol dictates that

he was to report these incoming

missiles, which could have led to a

retaliation from the Soviets, which

would have led to a retaliation from the

US and could have been a nuclear

disaster. There's been a lot of debate

about whether or not that would have

even happened had he reported the

missiles because there would have been

other checks that would have went into

place potentially, but with such high

tension and such little time with

incoming missiles, there's a lot of

speculation that yes, had he reported

it, they could have immediately just

decided to retaliatory strike the US.

Regardless, what is irrefutable is that

he made the right decision by disobeying

protocol, disobeying like the order of

operations here, by not reporting those

missiles. He made sure that there wasn't

a nuclear disaster. It was the right

call. The point I'm making is the

ability to disobey an order or not

follow through on protocol is something

that's important. And there's tons of

examples of it. I just chose this

because I think it's the one some of you

have probably heard of. So there's

no benefit to having fully autonomous AI

weaponry that can't disobey anything and

has to follow through on everything

without question.

>> update these, these

protections appropriately. So, you know,

think about the Fourth Amendment. It is

not illegal to, you know, put cameras

around everywhere in public space and,

you know, record every convers... it's a

public space. You don't have a right to

privacy in a public space. But, but today

the, the government couldn't record that

all and make sense of it. With AI, the

ability to transcribe speech, to look

through it, correlate it all, you could

say, "Oh, there's this, you know, this

person is a member of the opposition.

This person is expressing this view and

and make a map of all, you know, 100

million." And so, are you going to make

a mockery of the Fourth Amendment by, by

the technology finding kind of technical

ways around it?

>> He then goes on to say that maybe we

need to like update a lot of these

protections to encompass things like AI,

finding workarounds for it. And the

point he is making is that this kind of

implementation of AI could very much

make a mockery of the Fourth Amendment,

flushing it down this His point

is, even though it's not illegal to have

cameras in public all over the place, out

the wazoo, till the cows come home,

without AI they can't, like, piece

together everything, comb through

everything, make a map of people that's,

you know, opposition, stuff like that. But

with AI they can. Mass surveillance is

made much more possible. It would

circumvent protections of things like

the Fourth Amendment. He's right. So, he

is not backing down on these things,

which is what's causing so much friction

with Pete Hegseth and the Pentagon,

which I really feel like any sensible

person should see and be extremely

concerned because I think these are

reasonable restrictions. Now,

unfortunately, like I said, even if

Anthropic does stick to their guns here

and it does cost them this contract, it

doesn't just die there. It doesn't

fizzle out. OpenAI and xAI have made

it pretty clear they're willing to just

with a wide open mouth just take the

golden shower. Let them use it for

things like mass surveillance or

autonomous AI weaponry. Like they

they're totally fine with completely

unrestricted access which had my jaw on

the floor because xAI is Elon Musk's

company. You're telling me Elon Musk

would be okay with mass surveillance?

No, not that guy. No, I'd eat my left

shoe. I don't believe that for even a

second. Uh-uh. Maybe he just Maybe he

just doesn't know. Yeah, that's probably

what it is. Anyway, though, I I do think

this is something everyone should care

about. I I feel like the restrictions

are reasonable and the military should

have no qualms about not being able to

use it for mass surveillance on

American citizens or completely

autonomous AI weaponry.

Maybe that's a hot take. Maybe I'm on

the crackpipe, but I think those

restrictions are totally fair and it's

kind of alarming that they're making

such a huge stink about it and going to

like outright saying they're going to uh

blacklist Anthropic should they not lift

those limitations.

Don't think that's a good thing. It

makes it seem like they want to use it

to spy on every American citizen and

they want to use it for autonomous AI

weaponry. Those aren't good things.

That's that's how that's how it's

looking here. So yeah, just wanted to

yap about this a bit. That's it. See

you.

Interactive Summary

There is a serious ongoing dispute between the Pentagon and AI company Anthropic. The Pentagon, which holds a $200 million contract with Anthropic, demands unrestricted access to the company's AI technology and threatens to blacklist it if it refuses. Anthropic is resisting, primarily due to concerns about the AI's potential use for mass domestic surveillance of American citizens and for autonomous AI weapons. The speaker supports Anthropic's stance, arguing these are reasonable restrictions essential for constitutional protections and human discretion in military actions. Other AI companies like OpenAI and xAI are reportedly willing to provide unrestricted access, which the speaker says makes the Pentagon's aggressive demands particularly alarming and suggests a desire to use AI for these contentious purposes.
